AI can resurrect the dead, but should it?

The line between memorial and manipulation is thinner than you think.

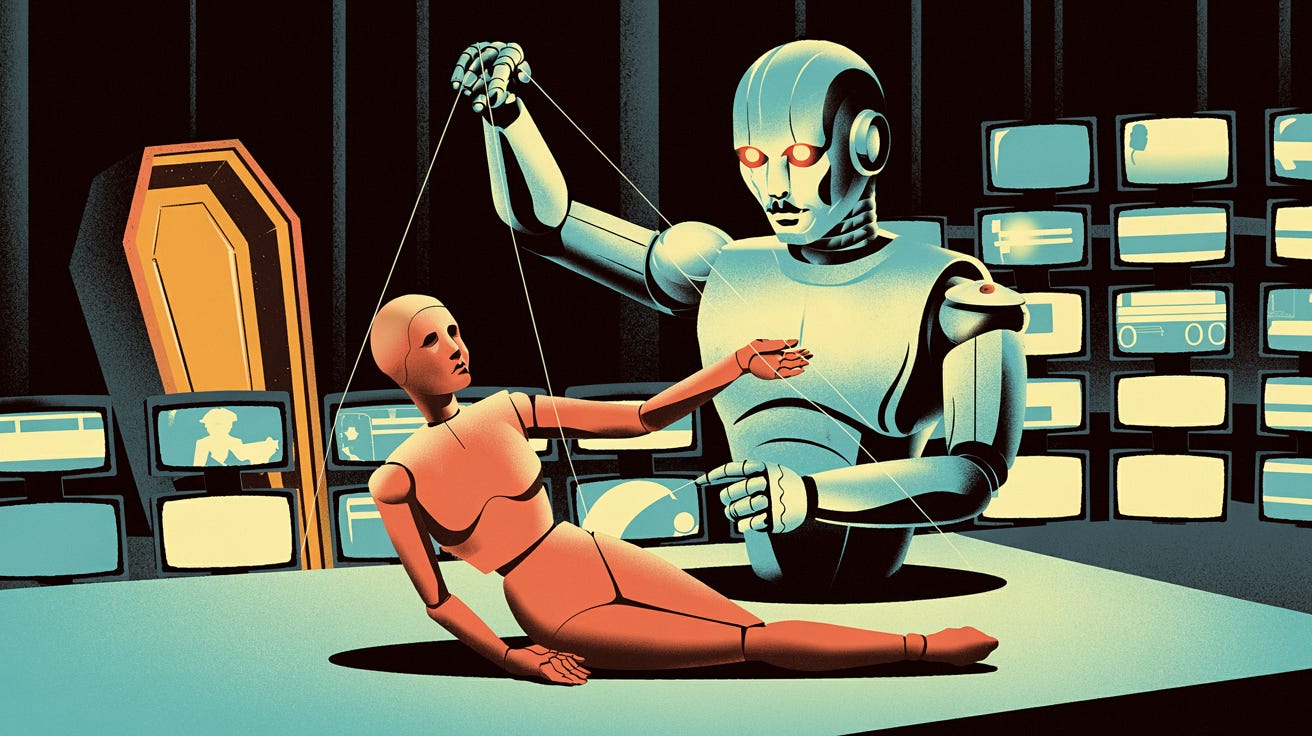

Popular AI readers understand better than most that technology reveals human nature. We build mirrors before we build tools. But when former CNN anchor Jim Acosta “interviewed” an AI-generated avatar of Joaquin Oliver, a teenager murdered in the 2018 Parkland shooting, he didn’t just hold up a mirror. He staged a grotesque puppet show where grief became a prop and algorithms spat pre-programmed activism.

Consider the mechanics first. This avatar wasn’t some sentient digital ghost. It was a stitched-together deepfake trained on Oliver’s photos and voice, programmed to parrot gun control talking points in what observers called a “hurried monotone without inflection.” The result? A simulacrum whose “movements of its face and mouth were jerky and unnatural, looking more like a dub-over than an actual person talking.”

Acosta didn’t stumble into this dystopian theater by accident. He promoted it as a “one of a kind interview,” framing it as a breakthrough. Yet when he asked the avatar, “What happened to you?”, the reply felt lifted from a lobbyist’s press release: “I was taken from this world too soon due to gun violence while at school. It’s important to talk about these issues so we can create a safer future for everyone.” No nuance. No humanity. Just recycled activism from an AI-resurrected corpse.

Oliver’s parents claim ownership of this digital seance, insisting they merely want to hear their son’s voice again. But their partnership with Acosta, a partisan journalist who gushed, “I really felt like I was speaking with Joaquin. It’s just a beautiful thing,” exposes the agenda. As Manuel Oliver admitted, they plan to deploy this avatar politically: “Joaquin is going to start having followers… He’s going to start uploading videos. This is just the beginning.” Imagine it. A dead teen weaponized as an eternal advocate, never aging, never questioning, forever trapped in a loop of activism.

Critics blasted the stunt as “creepy,” “weird,” and “unsettling,” with one branding Acosta an “opportunistic ghoul.” They’re right. This isn’t about healing. It’s about leveraging a tragedy to bypass living voices. Parkland survivors like David Hogg already champion gun control. Why resurrect Oliver? Because corpses don’t deviate from the script.

The technical failures here are almost poetic. When Acosta asked about solutions to gun violence, the avatar recited platitudes about “stronger gun control laws” and “community engagement.” Not a flicker of Oliver’s reported love for writing or Valentine’s Day flowers. Just ideological bullet points straight out of an anti-gun-rights activist’s fever dream.

True AI innovation respects the dead by preserving memory, not fabricating mouthpieces. This charade was a clear example of how not to do it. Perhaps one critic said it best:

“You’re interviewing ChatGPT, not Joaquin Oliver. Don’t piss on my leg and tell me it’s raining.”

We’re left with a clear lesson: AI, like any tool, is neither inherently good nor evil. It reflects the intentions of those who wield it. The same technology that can preserve memories, restore voices for grieving families, or create educational experiences can also be twisted into a political puppet show.

Let this be a case study in what happens when ethics take a backseat to propaganda.
