The best CPU for running local LLMs: top AMD vs Intel processors ranked

The best CPU for running local LLMs depends on RAM, PCIe lanes, and platform limits. Here are the top chips ranked for real builds.

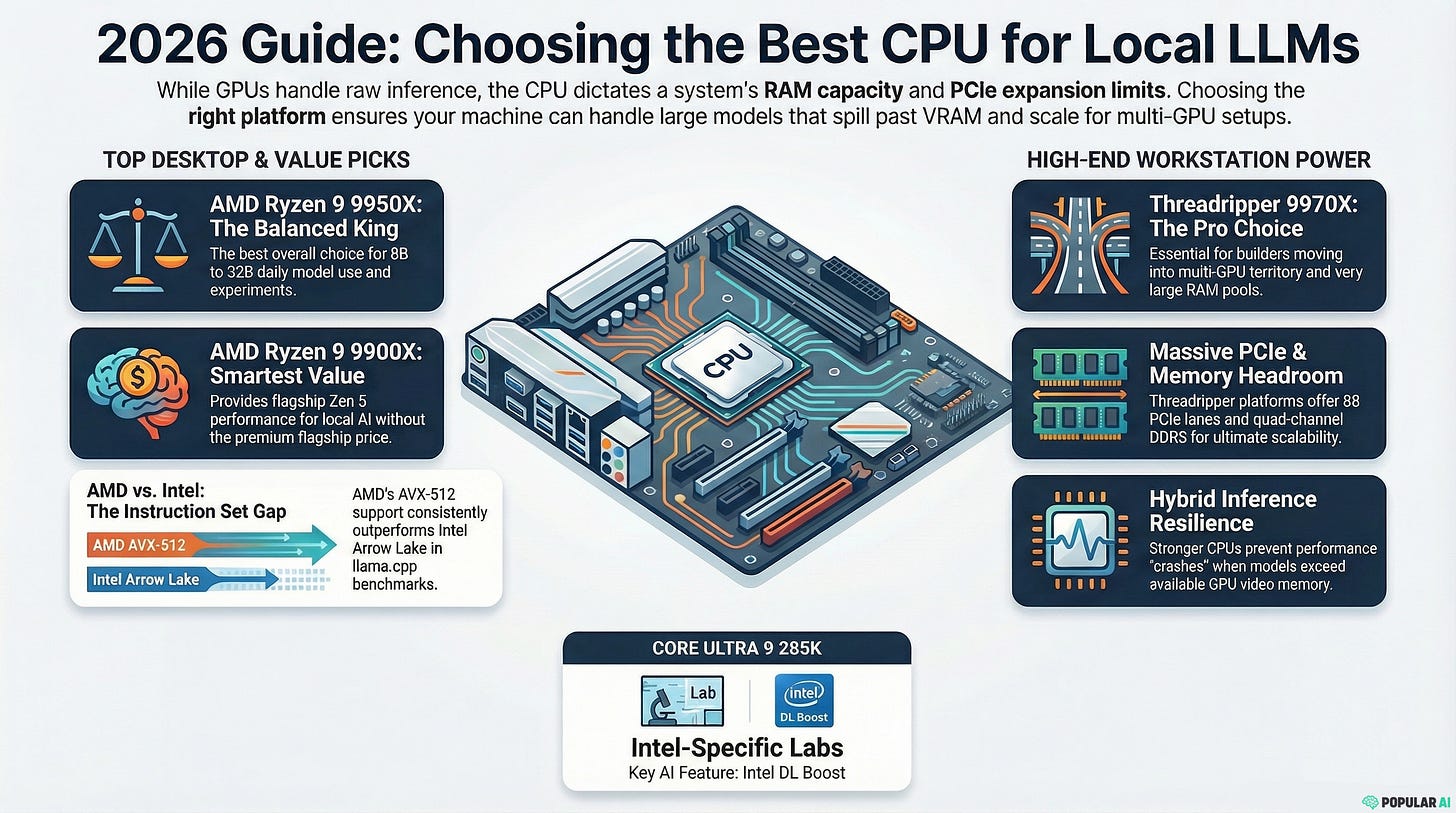

If you care about running local LLMs without being boxed in by API limits, feature removals, or policy changes, CPU choice still matters. The GPU still does most of the heavy lifting in a sensible local AI build, but the CPU decides how much system RAM you can realistically use, how well your machine handles models that spill past VRAM, and how far the platform can scale once you move beyond hobby experiments.

That tradeoff shows up clearly in llama.cpp, which supports CPU and GPU hybrid inference for models that do not fully fit in VRAM. In a LocalScore test that used the same 8B model on both machines, an RTX 3060 run delivered 53.0 tokens per second for generation and 1,506 tokens per second for prompt processing, while a CPU-only Ryzen 9 9950X run posted 11.5 and 122 tokens per second, respectively. The point is simple. GPU horsepower still matters more once the model fits, but the CPU shapes the experience when your local setup starts pushing into larger models, bigger contexts, and heavier multitasking.
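
To put the LocalScore figures quoted above in proportion, this short Python sketch simply divides the GPU numbers by the CPU-only numbers. It uses only the four values from the text; the variable names are illustrative.

```python
# LocalScore figures quoted in the text for the same 8B model (tokens per second).
rtx_3060 = {"generation": 53.0, "prompt_processing": 1506.0}
ryzen_9950x_cpu_only = {"generation": 11.5, "prompt_processing": 122.0}


def speedup(gpu: dict[str, float], cpu: dict[str, float]) -> dict[str, float]:
    """Return how many times faster the GPU run is for each shared metric."""
    return {metric: gpu[metric] / cpu[metric] for metric in gpu}


ratios = speedup(rtx_3060, ryzen_9950x_cpu_only)
for metric, ratio in ratios.items():
    print(f"{metric}: {ratio:.1f}x faster on the RTX 3060")
```

That works out to roughly 4.6x for generation and 12.3x for prompt processing. A plausible reading, offered as general background rather than a finding of the test, is that prompt processing is compute-heavy, where a GPU’s parallelism pays off most, while generation leans harder on memory bandwidth.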

For most buyers, the real question is not whether the CPU beats the GPU. It is which processor removes the biggest bottlenecks once memory capacity, PCIe expansion, and hybrid inference start to matter.

Why the platform matters more than many people think

On consumer AM5, AMD’s Ryzen 9000 chips top out at dual-channel DDR5, 256GB of memory support, and 24 usable PCIe lanes. Move up to AMD’s Threadripper 9000 platform and you get quad-channel DDR5-6400 RDIMM, ECC enabled by default, and 88 usable PCIe 5.0 lanes. That jump is what separates a strong desktop from a real local AI workstation.
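
One reason channel count matters: theoretical peak memory bandwidth scales with it, and CPU-side token generation is largely limited by how fast weights stream out of RAM. The sketch below is a back-of-the-envelope estimate, not a benchmark. It assumes DDR5-5600 on AM5 (an assumption; no AM5 memory speed is given here), 8 bytes per conventional DDR5 channel, and a hypothetical 40 GB model held entirely in system RAM. The “ceiling” uses the common rule of thumb that each generated token reads every weight once.

```python
def peak_bandwidth_gbs(channels: int, mega_transfers: int, bytes_per_channel: int = 8) -> float:
    """Theoretical peak DRAM bandwidth in GB/s (1 GB = 1e9 bytes).

    A conventional DDR5 channel is 64 bits (8 bytes) wide, so
    peak = channels * 8 bytes * transfers per second.
    """
    return channels * bytes_per_channel * mega_transfers * 1e6 / 1e9


def decode_ceiling_tps(bandwidth_gbs: float, weights_gb: float) -> float:
    """Rule-of-thumb upper bound on tokens/s when every token reads all weights from RAM."""
    return bandwidth_gbs / weights_gb


am5 = peak_bandwidth_gbs(channels=2, mega_transfers=5600)           # assumed DDR5-5600
threadripper = peak_bandwidth_gbs(channels=4, mega_transfers=6400)  # DDR5-6400 RDIMM

weights_gb = 40.0  # hypothetical model kept entirely in system RAM
for name, bw in (("AM5 dual-channel", am5), ("Threadripper quad-channel", threadripper)):
    print(f"{name}: {bw:.1f} GB/s peak, at most ~{decode_ceiling_tps(bw, weights_gb):.1f} tok/s")
```

Real throughput lands below these ceilings, and in hybrid mode only the layers left on the CPU stream from RAM, but the roughly 2.3x gap between 89.6 GB/s and 204.8 GB/s is the part of the platform jump that core counts alone do not show.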

That difference matters more in local LLM use than it does in ordinary desktop buying. A gaming-first CPU can look great on a spec sheet and still leave you boxed in once you want more RAM, more storage, or multiple GPUs. For local inference, the platform often matters as much as the core count.
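
The expansion side can be sanity-checked the same way. This sketch compares a hypothetical two-GPU build against the usable-lane figures given above (24 on AM5, 88 on Threadripper 9000). It is deliberately simplified: it counts only CPU lanes, assumes every GPU wants a full x16 link, and ignores chipset-attached lanes and x8/x8 bifurcation, which is how many AM5 boards actually run two cards.

```python
def lane_check(lane_budget: int, devices: dict[str, tuple[int, int]]) -> tuple[bool, int]:
    """devices maps a label to (count, lanes_each); returns (fits, lanes_left)."""
    used = sum(count * lanes for count, lanes in devices.values())
    return used <= lane_budget, lane_budget - used


# Hypothetical build: two GPUs at x16 plus two NVMe SSDs at x4, all on CPU lanes.
build = {"GPU x16": (2, 16), "NVMe x4": (2, 4)}

for platform, budget in {"AM5": 24, "Threadripper 9000": 88}.items():
    fits, left = lane_check(budget, build)
    print(f"{platform}: fits={fits}, lanes left={left}")
```

The build needs 40 lanes, so it overshoots AM5’s CPU lanes by 16 while leaving 48 spare on Threadripper for networking, more storage, or further GPUs.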

AMD also has the strongest overall argument for this workload right now. llama.cpp supports AVX, AVX2, AVX-512, AMX, and CPU plus GPU hybrid inference. In the benchmarks referenced in this article, AMD’s Zen 5 parts consistently came out ahead of Intel’s Core Ultra 9 285K in broad Linux testing and in llama.cpp, with AVX-512 support helping AMD’s desktop chips stand out.
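
Hybrid inference in llama.cpp is usually controlled by how many layers you offload to the GPU (the `--n-gpu-layers`, or `-ngl`, option); the remaining layers run on the CPU from system RAM. The helper below is a rough, hypothetical estimator for picking a starting value, not llama.cpp’s own logic. It assumes all layers are the same size and that a fixed reserve covers the KV cache, compute buffers, and non-layer tensors; the 40 GB, 80-layer, 24 GB example values are illustrative.

```python
import math


def gpu_layers_that_fit(weights_gb: float, n_layers: int, vram_gb: float,
                        reserve_gb: float = 1.5) -> int:
    """Estimate how many equally sized layers fit in VRAM after a fixed reserve."""
    per_layer_gb = weights_gb / n_layers
    usable_gb = max(vram_gb - reserve_gb, 0.0)
    return min(n_layers, math.floor(usable_gb / per_layer_gb))


# Hypothetical 70B-class model: ~40 GB of weights, 80 layers, on a 24 GB GPU.
n_layers = 80
on_gpu = gpu_layers_that_fit(weights_gb=40.0, n_layers=n_layers, vram_gb=24.0)
print(f"Offload {on_gpu} of {n_layers} layers; {n_layers - on_gpu} stay on the CPU")
```

In practice you would start near that number and adjust it while watching VRAM use, since a longer context grows the KV cache and eats into the reserve.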

Quick answers

Best overall for most readers: AMD Ryzen 9 9950X.

Best serious workstation CPU: AMD Ryzen Threadripper 9970X.

Best value pick: AMD Ryzen 9 9900X.

Fastest raw option: AMD Ryzen Threadripper 9980X.

The ranked list

1. AMD Ryzen 9 9950X

This is the cleanest answer for most people building a serious local LLM machine. The Ryzen 9 9950X gives you 16 cores, 32 threads, AVX-512 support, a 170W TDP, socket AM5, dual-channel DDR5, up to 256GB of memory support, and 24 usable PCIe lanes. In the benchmarks referenced in this article, it also outpaced Intel’s Core Ultra 9 285K in a broad Linux comparison and stayed strong in llama.cpp workloads.

What makes the 9950X so compelling is balance. It is fast enough to support serious single-GPU local AI work, strong enough to keep hybrid inference from feeling cramped, and flexible enough to anchor a one-box build that can handle everyday 8B to 32B use with room for 70B experiments. It does not have Threadripper’s memory channels or expansion headroom, but it gives most readers the best mix of speed, platform maturity, and sane cost.
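
The 8B-to-32B daily range, with 70B as an experiment, follows from simple size arithmetic. This sketch estimates weight size from parameter count and an assumed ~4.5 bits per weight (roughly the territory of common 4-bit quantizations), then checks it against a hypothetical 24 GB GPU. It ignores the KV cache and runtime buffers, so real memory use is higher.

```python
def weights_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight size in GB: billions of parameters * bits / 8 bits per byte."""
    return params_billion * bits_per_weight / 8


VRAM_GB = 24.0         # hypothetical single GPU
BITS_PER_WEIGHT = 4.5  # assumed average for a ~4-bit quantization

for size_b in (8, 32, 70):
    size_gb = weights_gb(size_b, BITS_PER_WEIGHT)
    spill_gb = max(size_gb - VRAM_GB, 0.0)
    where = "fits in VRAM" if spill_gb == 0 else f"spills ~{spill_gb:.1f} GB to system RAM"
    print(f"{size_b}B: ~{size_gb:.1f} GB of weights, {where}")
```

Under these assumptions 8B and 32B stay on the card, while a 70B model pushes roughly 15 GB into system RAM, which is where the CPU and memory platform start to set the pace.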

Best for: 8B to 32B daily local use, hybrid 70B experiments, single-GPU rigs, local agents, and anyone who wants the best non-Threadripper choice.

2. AMD Ryzen Threadripper 9970X

The Ryzen Threadripper 9970X is where the conversation shifts from fast desktop CPU to true workstation platform. You get 32 cores, 64 threads, quad-channel DDR5-6400 RDIMM, ECC enabled by default, and 88 usable PCIe 5.0 lanes. That matters if your local AI box is heading toward multi-GPU territory, very large RAM pools, or long-term upgrade plans.

This chip ranks above the 9980X for most serious buyers because it gives you the same platform advantages at a far more reasonable price. In the benchmarks referenced in this article, it also posted wins in several llama.cpp prompt-processing and text-generation tests. For builders who want a workstation that can grow into a local API server, bigger hybrid models, or a multi-user lab box, this is the strongest high-end recommendation on the list.

Best for: 256GB-plus RAM builds, multi-GPU rigs, local API servers, batching, 32B to 70B hybrid work, and builders who want room to grow.

3. AMD Ryzen 9 9900X

The Ryzen 9 9900X is the smartest value pick for readers who want real Zen 5 local AI performance without paying flagship prices. It gives you 12 cores, 24 threads, AVX-512, a 120W TDP, AM5, dual-channel DDR5, up to 256GB of memory support, and 24 usable PCIe lanes. In the benchmark comparisons referenced in this article, it still finished ahead of Intel’s Core Ultra 9 285K overall.

That is why the 9900X works so well in this ranking. It does not try to be the ultimate chip. It gives you the pieces that matter for local LLM work: strong CPU-side performance, enough cores for development and background tasks, and an AM5 platform that still scales into a serious desktop build. If the 9950X is the best overall answer, the 9900X is the answer for buyers who care about value without giving up the core advantages of AMD’s current desktop stack.

Best for: 8B to 14B daily use, lean 32B hybrid builds, dev workstations, and readers who want Zen 5 local AI performance without flagship spending.

4. AMD Ryzen Threadripper 9960X

The Ryzen Threadripper 9960X is the least expensive way onto Threadripper while still getting the platform benefits that matter for local LLM work. It brings 24 cores, 48 threads, quad-channel DDR5-6400 RDIMM, ECC, 88 usable PCIe lanes, and a 350W TDP. For many builders, that is enough to justify the jump even before raw CPU speed becomes the main story.

This is the Threadripper for people buying the platform first. If you need bigger RAM pools, more storage, extra networking, or a roadmap toward multiple GPUs, the 9960X makes far more sense than stretching a consumer board past what it was built to do. It is the entry point for a proper workstation build, and for local RAG servers or homelab deployments that need more capacity than glamour, that matters a lot.

Best for: 192GB to 384GB memory builds, local RAG servers, multi-drive and multi-GPU homelabs, and anyone buying Threadripper for platform reasons first.
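
The 192GB-to-384GB range maps onto balanced quad-channel memory layouts. The sketch below lists configurations that populate all four channels equally, assuming an 8-slot board (up to two DIMMs per channel) and a hypothetical set of RDIMM sizes. Check your board’s qualified memory list, since running two DIMMs per channel can reduce the supported memory speed.

```python
from itertools import product

CHANNELS = 4  # quad-channel Threadripper 9000 platform


def balanced_configs(dimm_sizes_gb, dimms_per_channel=(1, 2)):
    """Return sorted (total_gb, dimm_count, dimm_size_gb) tuples with every channel populated equally."""
    configs = []
    for size_gb, dpc in product(dimm_sizes_gb, dimms_per_channel):
        count = CHANNELS * dpc
        configs.append((count * size_gb, count, size_gb))
    return sorted(configs)


# Hypothetical RDIMM sizes; keep only totals in the 192GB to 384GB range.
for total, count, size in balanced_configs((32, 48, 64, 96)):
    if 192 <= total <= 384:
        print(f"{count} x {size} GB = {total} GB")
```

Keeping every channel populated equally is what preserves the full quad-channel bandwidth; the capacity steps simply follow from multiplying DIMM size by four or eight slots.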

5. AMD Ryzen Threadripper 9980X

If you care about raw CPU-side headroom more than value, the Ryzen Threadripper 9980X is the monster on this list. You get 64 cores, 128 threads, 256MB of L3 cache, quad-channel DDR5-6400 RDIMM, ECC, and 88 usable PCIe lanes. In the benchmarks referenced in this article, it led many LocalScore and llama.cpp tests, especially the heavier CPU-oriented workloads.

It ranks fifth because this list is about what people should buy, not what wins the most extravagant spec contest. The 9970X can be the smarter workstation purchase for far less money, and some benchmark slices still favored the cheaper chip. Even so, if your budget is effectively open and you want the heaviest CPU-first local LLM workstation you can reasonably build on a desktop platform, this is the top-end answer.

Best for: CPU-only monster builds, giant contexts, multi-user local serving, and builders who care about maximum headroom more than value.

6. AMD Ryzen 9 9950X3D

The Ryzen 9 9950X3D is the luxury pick for buyers who want one machine to handle both top-tier gaming and local AI. It keeps 16 cores, 32 threads, AM5, AVX-512, and a 170W TDP, while doubling L3 cache to 128MB. In the benchmarks referenced in this article, it edged out the plain 9950X overall and stayed well ahead of Intel’s Core Ultra 9 285K.

For an LLM-first buyer, though, the extra spend is harder to justify. The added cache helps gaming more than it changes the platform limits that matter most for local inference. You are still on dual-channel AM5, still capped by consumer-class expansion, and still not getting the kind of memory headroom that makes Threadripper special. That is why it belongs below the plain 9950X in a ranking focused on local LLM work, even if it is the more tempting flex build.
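
A quick ratio shows why extra L3 does little for this workload: even 128MB is a small slice of a model’s weights, so token generation still streams most of them from DRAM. The sketch assumes a hypothetical 8B model at ~4.5 bits per weight (about 4.5 GB) and uses the plain 9950X’s 64MB of L3 for comparison.

```python
def cache_share(l3_mb: float, weights_gb: float) -> float:
    """Fraction of the model weights that L3 could hold at once (1 GB = 1000 MB)."""
    return l3_mb / (weights_gb * 1000)


WEIGHTS_GB = 4.5  # hypothetical 8B model at ~4.5 bits per weight

for chip, l3_mb in {"Ryzen 9 9950X": 64, "Ryzen 9 9950X3D": 128}.items():
    print(f"{chip}: L3 could hold {cache_share(l3_mb, WEIGHTS_GB):.1%} of the weights")
```

Going from about 1.4% to about 2.8% of even a small model is not enough to change the memory-bound picture, and the share shrinks further as models grow.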

Best for: one-box gaming plus local AI builds where you want no compromise on the gaming side.

7. Intel Core Ultra 9 285K

The Intel Core Ultra 9 285K is the Intel pick if you already know you want to stay on Intel. It offers 24 cores, 24 threads, 125W base power, 250W max turbo power, dual-channel DDR5-6400, 256GB max memory support, and Intel DL Boost. On paper, that is a serious desktop chip.

The problem is that AMD’s Zen 5 parts, according to the benchmark comparisons referenced in this article, kept beating it in broad Linux testing and in llama.cpp, where Arrow Lake’s lack of AVX-512 hurt its standing. That does not make the 285K a bad processor. It makes it a harder recommendation for this specific workload. It is defensible for Intel-leaning buyers or OpenVINO-heavy experimentation, but based on those results it is not the best CPU for local LLMs right now.
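
If you are unsure whether a chip exposes AVX-512, Linux reports CPU feature flags in /proc/cpuinfo. This is a minimal sketch for x86 Linux only; it checks the `avx512f` (AVX-512 Foundation) and `avx2` flags, and the parsing helpers are illustrative rather than part of any library.

```python
from pathlib import Path


def cpu_flags(cpuinfo_text: str) -> set[str]:
    """Return the feature flags from the first 'flags' line of /proc/cpuinfo text."""
    for line in cpuinfo_text.splitlines():
        if line.startswith("flags"):
            return set(line.split(":", 1)[1].split())
    return set()


def has_avx512(flags: set[str]) -> bool:
    """AVX-512 Foundation (avx512f) is the base subset every AVX-512 x86 CPU reports."""
    return "avx512f" in flags


if __name__ == "__main__":
    cpuinfo = Path("/proc/cpuinfo")  # present on Linux only
    if cpuinfo.exists():
        flags = cpu_flags(cpuinfo.read_text())
        print(f"AVX-512: {has_avx512(flags)}, AVX2: {'avx2' in flags}")
    else:
        print("/proc/cpuinfo not found; this check assumes Linux")
```

On an Arrow Lake chip such as the 285K you would expect `avx512f` to be absent, while Zen 5 parts should report it.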

Best for: Intel-leaning buyers, OpenVINO-heavy experimentation, or mixed workloads where you have another reason to stay on Intel.

8. AMD Ryzen 7 9700X

The Ryzen 7 9700X is the efficient entry point on this list. It is an 8-core, 16-thread, 65W Zen 5 CPU that makes sense in a smaller local AI box where the GPU is doing most of the real model work. It is not going to make CPU-only 70B inference feel good, and it is not supposed to.

What it does offer is a credible starting point for 7B to 14B workloads, lightweight agents, quieter desktops, and energy-conscious builds that still want modern AMD features. If your goal is a compact single-GPU system that runs small local models well and stays civilized on power draw, the 9700X is an easy chip to understand and justify.

Best for: 7B to 14B work, starter local AI desktops, homelab utility nodes, and efficient single-GPU builds.

What to buy for each type of builder

If you want one direct answer and you care about the best mix of performance, flexibility, and cost, buy the Ryzen 9 9950X. It is the processor that makes the fewest compromises for most serious local AI desktops.

If you are building around very large RAM pools, more than one GPU, or a workstation that needs room to grow for years, buy the Threadripper 9970X. It is the most sensible high-end platform choice on the board.

If you want the strongest value in a serious desktop chip, buy the Ryzen 9 9900X. It gives up some top-end speed, but it keeps the platform and instruction set advantages that matter for local LLM work.

If you want the fastest raw CPU on the list and cost barely enters the discussion, buy the Threadripper 9980X.

If the machine also needs to double as a premium gaming box, buy the Ryzen 9 9950X3D.

The bottom line

The best CPU for running local LLMs is usually the one that removes memory and expansion bottlenecks without wasting money on the wrong kind of halo. For most people, that CPU is the AMD Ryzen 9 9950X. For serious workstation builds, it is the AMD Ryzen Threadripper 9970X. For value-conscious buyers who still want a real local AI desktop, it is the AMD Ryzen 9 9900X.

The GPU still decides a huge part of the experience once the model fits. The CPU decides how painful things get once it does not. That is the difference between a pleasant local setup and a machine that constantly pushes you back toward the cloud. The GPU and CPU throughput figures quoted earlier come from LocalScore.

The best CPU for running local LLMs is still a bigger question than many people think because RAM capacity, PCIe lanes, and platform longevity can matter as much as raw benchmark numbers. In your own local AI builds, what ends up mattering most after the first week: efficiency, upgrade path, memory ceiling, hybrid inference performance, or price?