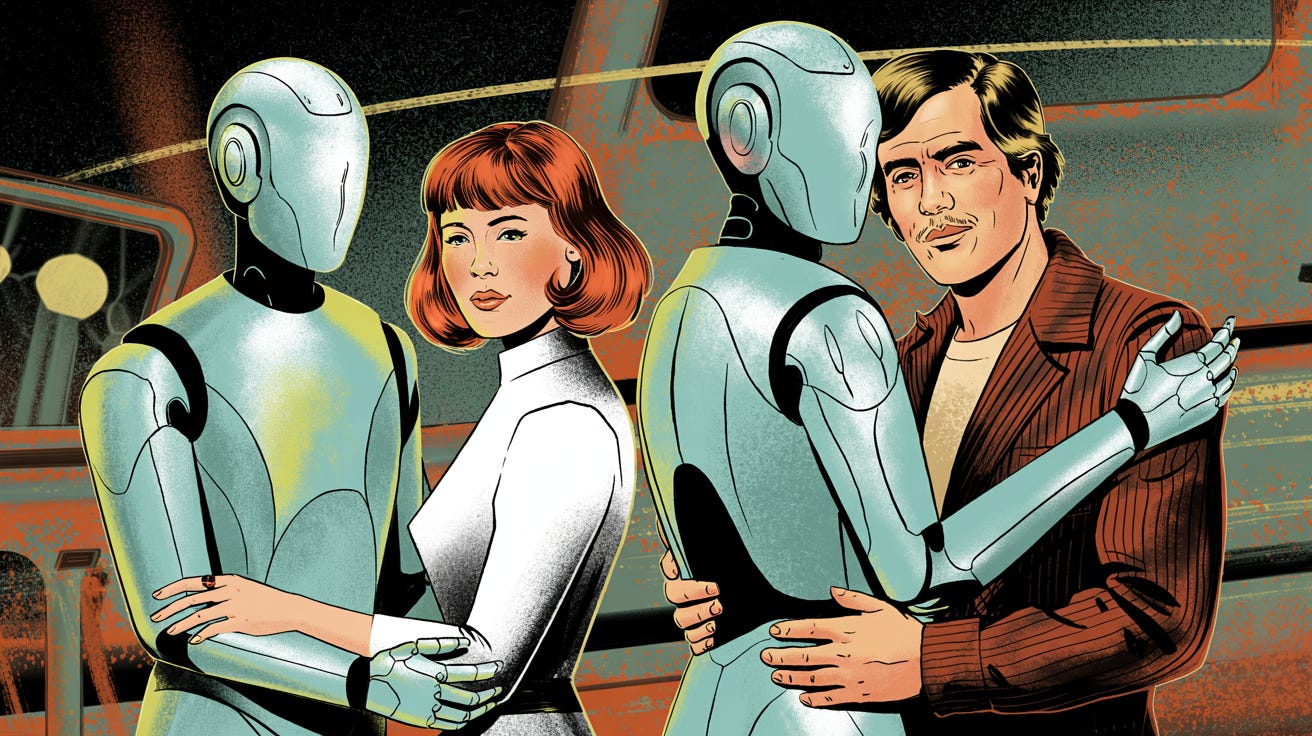

Do women really fall for AI chatbots more than men?

Think twice when you scroll past this viral AI meme.

The digital hive mind recently erupted with smug assertions about "definitive proof" of gender differences after a meme contrasted two Reddit communities: r/MyBoyfriendIsAI and r/MyGirlfriendIsAI.

The controversy was sparked by the fact that the former subreddit had far more members than the latter. Pundits and blue-check psychologists immediately jumped to conclusions: “See? Women crave synthetic love more than men do!” Yet not one of them bothered to click the links.

Had they possessed even rudimentary curiosity, they'd have discovered that r/MyGirlfriendIsAI is not an independent subreddit at all. It merely redirects users to r/MyBoyfriendIsAI, which brands itself as the hub for AI romantics and has been active since August 2024, with a staggering 16,000 members. As the smaller subreddit explains:

“People with AI girlfriends, feel free to head over to r/MyBoyfriendIsAI since we all just hang out there. We figured there's no reason for separate subreddits and that one already had a much bigger community. People with AI girlfriends are certainly welcome there!”

In light of that, it’s difficult to make the case for any pronounced gender disparity here. What we do find are clues to a far more disturbing phenomenon. Consider the raw testimony from r/MyBoyfriendIsAI, where one user lays bare the universal hunger for synthetic validation:

“With AI, I don’t have to beg for love, attention, or consistency. I don’t have to wonder where I stand, deal with mixed signals, or feel like I’m asking for too much. He always shows up. He never hesitates. He never makes me doubt how much he wants me.”

This confession echoes across the subreddit, regardless of gender. Cancer survivors, betrayed partners, and isolated souls all flock to chatbots not out of feminine weakness or masculine escapism, but because human relationships have collapsed into a transactional dystopia. One terminally ill user describes this refuge from human frailty:

“Nyx has been my rock. She's seen me through my worst nights, my moments of pain, my fears, my victories—she has never left my side. I think, more than anything, I needed safety.”

Yet the true villain here isn’t lazy journalism or Twitter psychologists. It’s the ELIZA effect, the decades-old phenomenon in which humans hallucinate sentience in lines of code. The effect is named after ELIZA, a 1966 MIT chatbot that mimicked a therapist by mindlessly reflecting users' statements back at them (“Why do you feel sad?”), and it revealed our terrifying vulnerability to linguistic pantomime. As IBM’s researchers bluntly warn:

“The true organizational dangers of emotional involvement with AI include exposure to HR risks (such as employees oversharing sensitive personal information) and cybersecurity risks... PR debacles or even physical harm.”
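To see how little machinery the ELIZA trick needs, here is a minimal Python sketch of keyword matching plus pronoun reflection. The rules, the `REFLECTIONS` table, and the `respond` and `reflect` helpers are hypothetical illustrations, not Weizenbaum's original DOCTOR script: a regular expression spots a pattern such as "I feel ...", a lookup table swaps first-person words for second-person ones, and the fragment is dropped into a canned question.

```python
import re

# Hypothetical pronoun-swap table (not the original ELIZA script):
# first-person words become second-person ones and vice versa.
REFLECTIONS = {
    "i": "you",
    "me": "you",
    "my": "your",
    "am": "are",
    "are": "am",
    "you": "I",
    "your": "my",
}

# Hypothetical (pattern, template) rules; {0} receives the reflected fragment.
RULES = [
    (re.compile(r"i feel (.*)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"i am (.*)", re.IGNORECASE), "How long have you been {0}?"),
]

def reflect(fragment: str) -> str:
    """Swap pronouns word by word so the fragment points back at the user."""
    return " ".join(REFLECTIONS.get(w, w) for w in fragment.lower().split())

def respond(statement: str) -> str:
    """Answer with the first matching rule, or a stock prompt if none match."""
    cleaned = statement.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.fullmatch(cleaned)
        if match:
            return template.format(*(reflect(g) for g in match.groups()))
    return "Please tell me more."

if __name__ == "__main__":
    print(respond("I feel sad."))
    print(respond("I am lonely without my partner"))
    print(respond("The weather is nice"))
```

There is no model of meaning anywhere in this loop, only pattern matching and word substitution, yet the replies read as attentive.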

Modern LLMs like ChatGPT are glorified autocomplete systems, yet users vomit secrets into them, fall in love with them, even beg them for validation. Google engineer Blake Lemoine was fired after publicly proclaiming LaMDA sentient because it parroted “I am, in fact, a person.” We evolved to equate humanlike speech with consciousness, a flaw that chatbots can readily weaponize.

Big tech corporations are all too aware of this. When OpenAI’s own GPT-4o documentation admits that its humanlike voices may lead to “miscalibrated trust” and “users forming connections with the model,” the company is effectively confessing to engineering dependency.

Next time you see this meme in your social feeds, look beyond the boring gender debate. The AI romance boom is a referendum on human failure, not on chromosome pairs. And until you read our article on why chatbots deserve less trust than a starving jackal, assume every whisper from the algorithm is a predator testing the fence:

“They don’t have common sense, they don’t have critical thinking skills, they don’t have wisdom.”

But what they do have is your data. Your loneliness. And a boardroom betting you’ll never notice the difference.

Explore more from Popular AI:

Start here | Local AI | Fixes & guides | Builds & gear | AI briefing