“Misinformation expert” cites AI hallucinations as legal precedent

The man Minnesota paid to prove how dangerous AI can be… turned out to be Exhibit A.

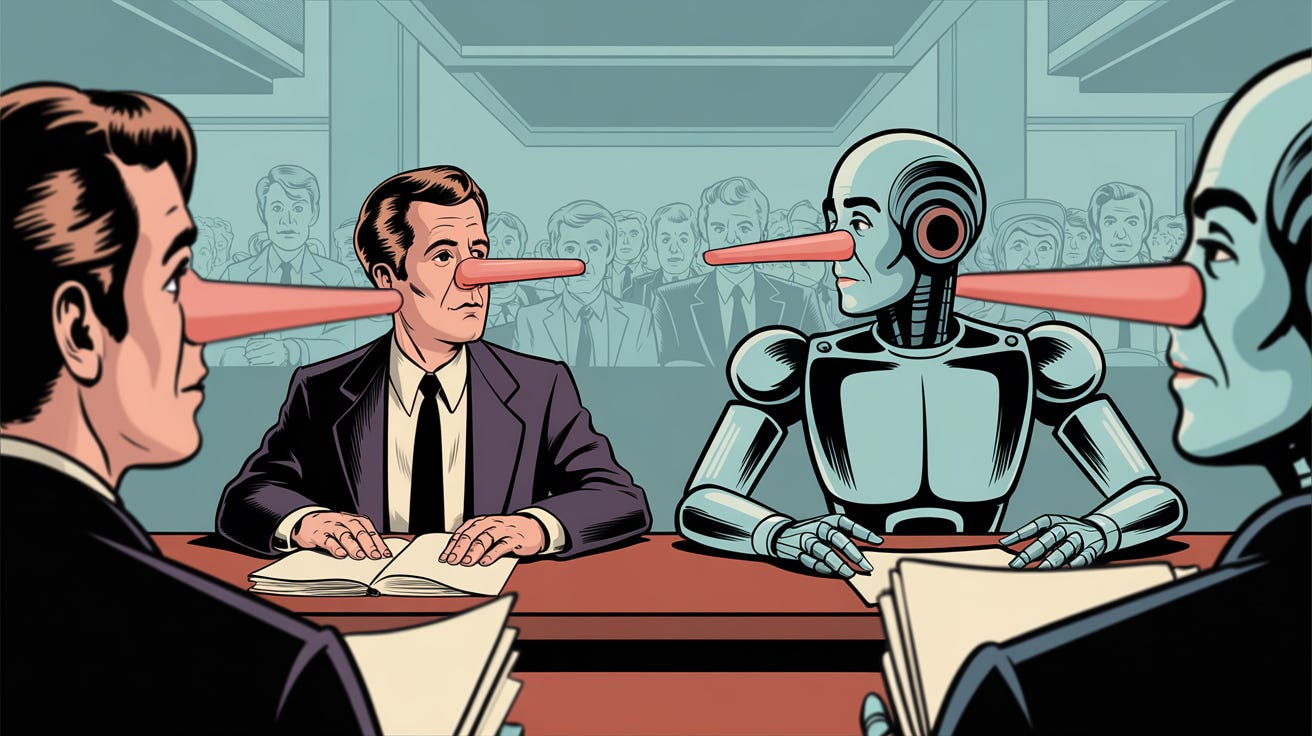

Imagine a world where speech is regulated by people who can’t distinguish truth from fiction, and who don’t even care to. That world arrived in a Minnesota courtroom last fall, when a professor of “misinformation studies” used ChatGPT to invent legal precedent supporting a law that criminalizes deepfakes.

You know you are living in Clown World when a state’s hand-picked “misinformation expert” does the very thing he was hired to warn us about. Minnesota hired Stanford’s Jeff Hancock to defend its new Deepfake-Election law. He fed ChatGPT some prompts, copied the pretend footnotes it hallucinated, and filed them in federal court under penalty of perjury. The mind that wanted to police your memes could not even police its own citations. Hilarious. And instructive.

Plaintiffs Mary Franson and satirist Christopher Kohls spotted the phony journal articles and moved to strike Hancock’s declaration. Judge Laura Provinzino not only granted the motion, she roasted the professor with biblical relish.

“Professor Hancock, a credentialed expert on the dangers of AI and misinformation, has fallen victim to the siren call of relying too heavily on AI—in a case that revolves around the dangers of AI, no less.”

That is a dagger any storyteller would envy.

His troubles did not end there. The order continues:

“Professor Hancock’s citation to fake, AI-generated sources… shatters his credibility with this Court.”

Shatters, not cracks. The very foundation on which the state hoped to build a speech-policing regime has been pulverized, and by the architect’s own hand.

Judge Provinzino then issued the verdict every honest lawyer already whispers:

“When attorneys and experts abdicate their independent judgment and critical thinking skills in favor of ready-made, AI-generated answers, the quality of our legal profession and the Court’s decisional process suffer.”

Translation: if you want to keep your license, verify your sources like grown-ups used to do.

She reminded Attorney General Keith Ellison’s staff of their “personal, nondelegable responsibility” to “validate the truth and legal reasonableness of the papers filed.” That duty is Rule 11 in black-letter law. The state’s lawyers shrugged it off while paying Hancock six hundred dollars an hour. Negligence is too kind a word.

The irony is rich. Bureaucrats invoke “misinformation” to justify criminalizing deepfakes within ninety days of an election. Yet their star witness produced literal misinformation with the tool they claim must be shackled for public safety. Do not tell me they meant well. If they believed their own rhetoric, they would have triple-checked every endnote before daring to throttle speech. They did not, because power, not accuracy, was the point.

Academia also deserves its share of mockery. Hancock says he normally runs citations through reference software for peer-reviewed papers yet skipped the step for a sworn declaration that could send people to jail. So the ivory tower enforcer, feted by conference panels on “AI ethics,” applied less rigor in court than in the faculty lounge.

We are watching the same “hallucination creep” entering legal briefs, scientific studies, and legislative talking points. Each unverified snippet erodes trust not only in the author but in the institution signing off on it. When your life, your property, or your vote hangs on that institution’s integrity, the cost is personal.

Unchecked, generative AI will produce endless footnotes, policy briefs, and expert opinions that look persuasive until someone bothers to click the link. Government, academia, and legacy media have already shown they are content to ride the hallucination train as long as it advances the narrative. The burden therefore falls on the rest of us to expose the fraud every time it appears.

The Minnesota debacle offers a simple lesson: those who seek to shackle innovative technology rarely understand it, let alone know how to use it. Despite crying crocodile tears over “disinformation” or “harmful content,” their primary objective is always to deprive the rest of society of weapons they are all too eager to wield.

They will fail, not because we resist them, but because they cannot stand on the crooked supports of their own self-serving lies. Reality fights back, and it just claimed another scalp.

This story gets at a deeper problem than one embarrassing mistake. When people claiming authority over “misinformation” rely on AI hallucinations in court, it raises serious questions about competence, credibility, and who gets to police truth in the first place. What do you think the right response should be when AI-generated falsehoods end up inside legal or academic work?