NIST wants comments on “secure AI agents.” Here’s why that should worry you

NIST’s AI agent security RFI and the regulation pipeline hiding in plain sight

If you want to understand how AI regulation actually happens in the United States, ignore the speeches and watch the paperwork. One of the most important regulatory proto-events is not a law at all. It is the standards process.

That is why NIST’s Request for Information (RFI) on security considerations for AI agents, published in the Federal Register, deserves attention. The comment period runs through March 9, 2026.

What the RFI says, in plain English

NIST’s notice asks stakeholders for practices and methodologies to measure and improve the security of AI agent systems as they are developed and deployed. The key phrase is “agent systems.” These are not just chatbots. They are software that can act through tools, call APIs, orchestrate tasks, and move through multi-step workflows.

In other words, it targets exactly the category of systems that enterprises are racing to deploy because they promise labor leverage.

Why a standards process can become de facto law

The government does not need to pass a sweeping AI agent law to control AI agents.

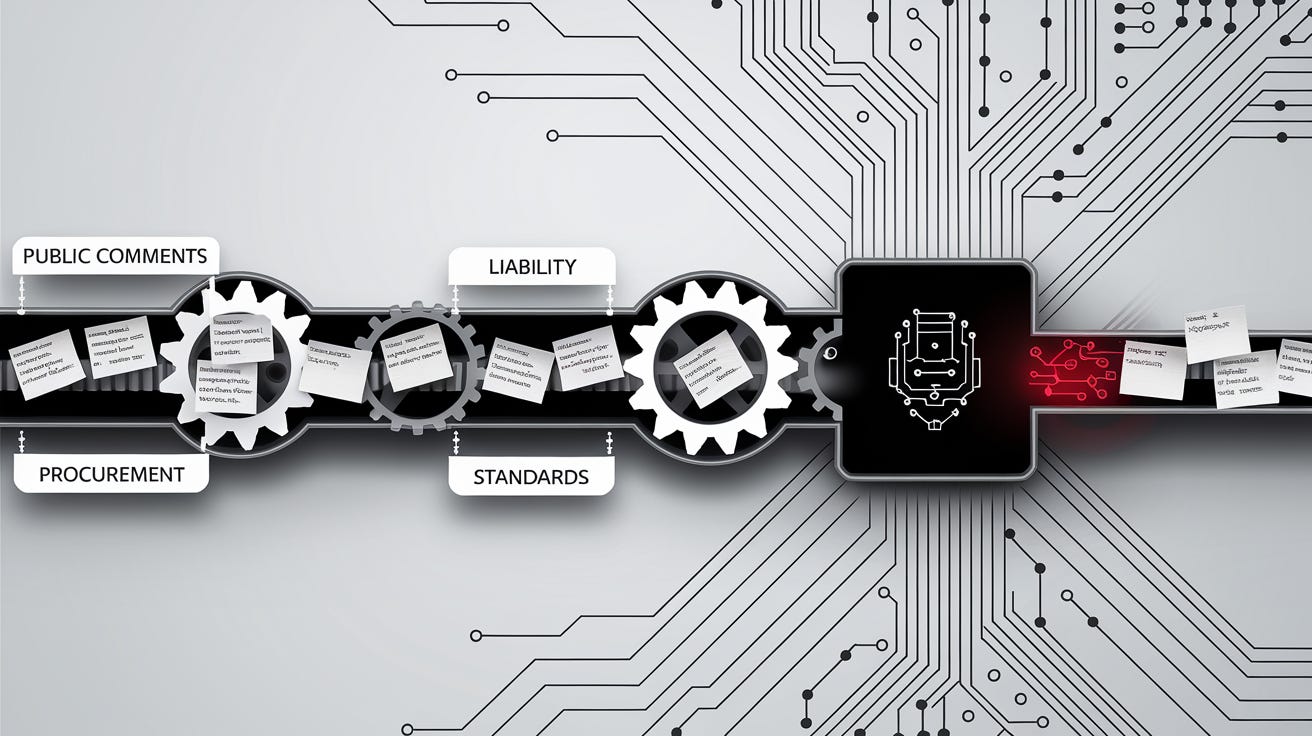

A standard can become “mandatory” through three quiet mechanisms:

Federal procurement

If agencies require conformance for contracts, the standard becomes a market gate.

Liability expectations

Once a standard exists, courts and insurers tend to treat it as the baseline for “reasonable care.” Deviate from it, and you invite the argument that you were negligent.

Regulatory borrowing

State and federal regulators routinely import standards language into rules because it is already written, already debated, and already boring.

That is why a “request for information” matters. It is where the vocabulary is set, and vocabulary decides what can be punished later.

Security... for whom?

Security is real. AI agents can cause real damage. But “security” is also a political solvent that dissolves rights when nobody is watching. So here are three questions worth asking:

Will the resulting guidance privilege large incumbents?

If compliance requires expensive audits, proprietary tooling, or bureaucratic reporting, open-source projects and small shops get squeezed out.

Will “secure deployment” become an excuse for identity mandates?

NIST’s broader body of guidance often pairs security with stronger identity verification and access controls. That is fine inside a company. It is dangerous when it becomes a default expectation for citizens using general-purpose tools.

Will the standard push centralized logging and retention?

Agent security frameworks can easily slide into “record everything.” That is a privacy nightmare when agents touch personal browsing, local files, and communications.

This is where the anti-establishment instinct is useful. Institutions rarely waste a crisis. If agent mishaps rise, the standards language written now will be the justification later.

Agents are already brittle

Public status pages and user reports are a reminder that agent systems are not magical. They are software. Software fails.

Anthropic’s status page recorded elevated errors on Claude models.

Users are also reporting Codex streaming disconnects.

These are not “agent jailbreaks”; they are mundane reliability issues. Yet mundane issues often produce the most heavy-handed policy responses, because bureaucracies understand outages better than they understand model behavior.

NIST’s AI agent security RFI is a hinge. Today it is voluntary input. Tomorrow it can become a procurement checkbox. After that it can become the legal baseline used to punish anyone outside the approved lane.
