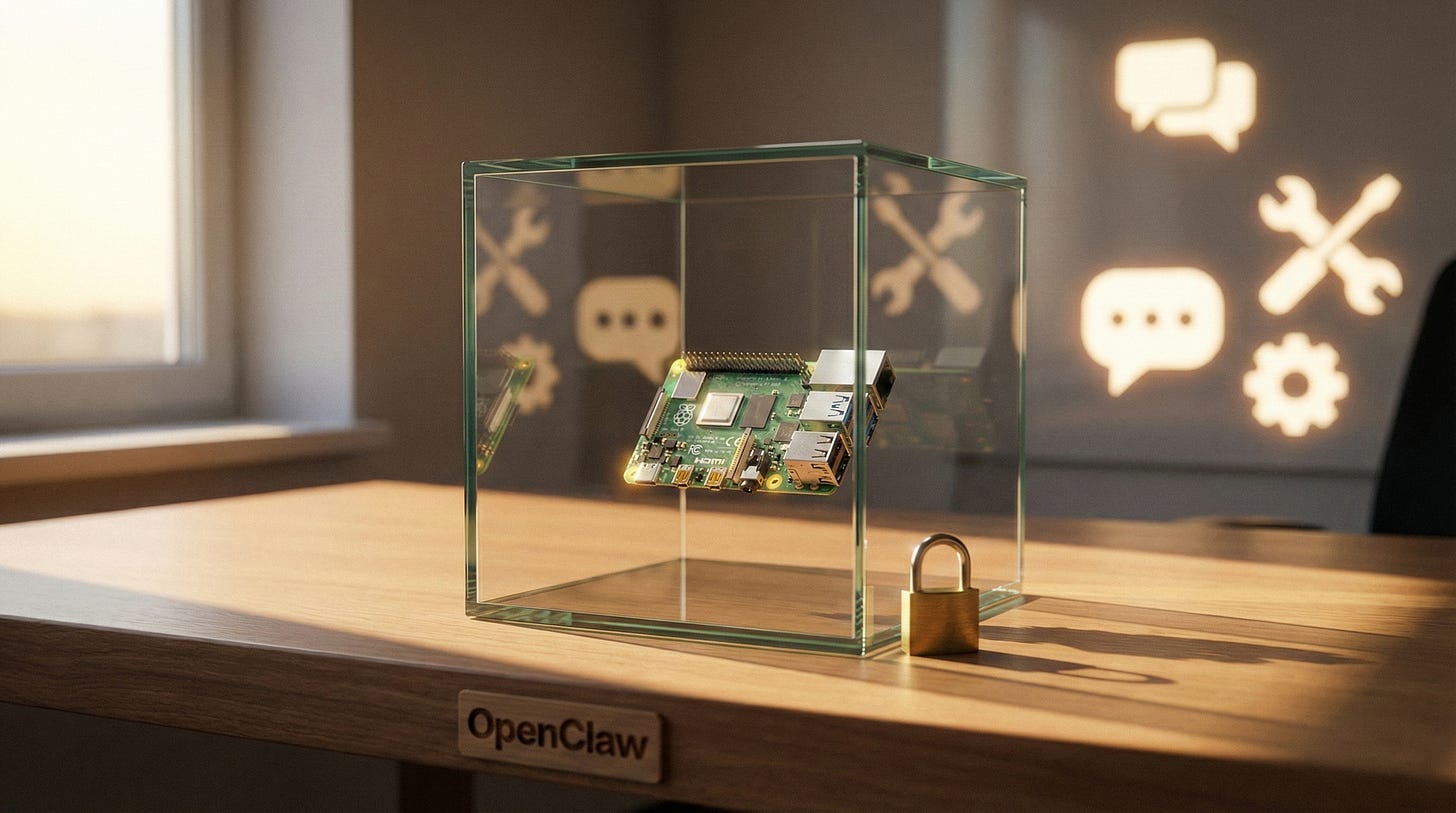

OpenClaw is going viral because it offers power without permission. That’s also the risk

The OpenClaw boom signals a shift to local-first AI agents. Learn the upside for autonomy, the security traps, and the basics of safer self-hosting.

OpenClaw, a rapidly growing open-source “personal AI assistant” you can run yourself, is exploding in popularity while security researchers warn that naïve deployments can leak secrets, expose endpoints, and hand attackers a remote-control panel for your digital life.

That combination is exactly why it matters to liberty-minded readers.

What OpenClaw actually is

OpenClaw bills itself as “a personal AI assistant you run on your own devices,” with integrations across common chat platforms and the ability to act across your tools.

The cleanest starting point for understanding what it is and how it works is the official OpenClaw GitHub repository.

The pitch is seductive: bring your own models, keep your assistant close to your data, and talk to it where you already live.

If you have been waiting for a post-app era where your tools feel like they work for you instead of the other way around, this is the direction. A local-first agent is a real step toward autonomy.

Why it is catching fire now

The timing makes sense. The public is tiring of centralized AI that can change rules overnight, price you out, or refuse tasks based on shifting policy. A self-hosted agent promises continuity. It also promises privacy, if you configure it correctly and use local inference.

A recent write-up traces OpenClaw’s meteoric growth in GitHub stars and explains why the project caught attention in the first place, though its framing leans into the hype.

Another commentary piece ties the rise of OpenClaw to a broader wave of “agentic” tools and the weird new ecosystems they enable.

Even if you ignore the hype, the underlying trend is real: agents are shifting from chat toys to systems that can act.

Once software can act, it becomes a power tool.

The hard part: an agent is a privileged software operator

OpenClaw’s whole value proposition is that it can integrate with channels, services, and potentially your machine. That means credentials, webhooks, endpoints, and permissions.

Security people are not “anti-open-source” when they warn about this class of tool. They are doing the obvious math.

Cisco’s AI team has already warned that personal agents like OpenClaw can execute commands, read and write files, and become prompt-injection targets if you grant them high privileges.

Bitsight is even blunter about exposed instances and the risks of integrating an “omnipotent” agent with too many systems.

Kaspersky points to a security audit that reportedly found a large number of vulnerabilities while the project was still operating under an earlier name.

Fortune’s reporting collects the same theme from multiple researchers: the tool is powerful, and it attracts unsafe usage patterns.

Calling this “fear” misses the point. This is what happens when you put an automation layer on top of everything you own.

The liberty tradeoff: decentralization increases responsibility

A centralized AI assistant can be coerced or censored, but it also tends to hide its complexity behind managed infrastructure. You are renting convenience. You are also accepting surveillance risk and policy risk, because the vendor is the choke point.

A local assistant flips the bargain. You get capability without permission. You also inherit the operational discipline that Big Tech normally forces on you, sometimes for its own benefit, sometimes because it has to.

If you do not rotate keys, lock down ports, and treat plugins as untrusted code, you are building your own compromise.

That is the core trade.

How to run OpenClaw without handing over the keys to your kingdom

Start by treating the assistant like a server, not an app.

Read the docs, deployment notes, and security guidance in the OpenClaw GitHub repository. Assume anything it exposes on your network will be scanned. If you use remote access, assume it will be probed and brute-forced.

Then reduce blast radius.

Put it in a container or VM. Use separate API keys with minimal scopes. Avoid linking it to your primary email and cloud admin accounts until you understand the permission graph.
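If you go the container route, the flags matter more than the container itself. Here is a minimal sketch, assuming Docker, that builds a locked-down `docker run` command. The image name, port, and env-file path are hypothetical placeholders, not values from the OpenClaw docs; check the repository for the real image, port, and required variables.

```python
import shlex


def hardened_run_args(image: str, port: int, env_file: str) -> list[str]:
    """Build a `docker run` argument list that limits blast radius.

    image, port, and env_file are hypothetical placeholders, not
    OpenClaw's documented values.
    """
    return [
        "docker", "run", "--rm",
        "--read-only",                          # root filesystem is immutable
        "--tmpfs", "/tmp",                      # scratch space, discarded on exit
        "--cap-drop", "ALL",                    # drop every Linux capability
        "--security-opt", "no-new-privileges",  # block setuid-style escalation
        "--pids-limit", "256",                  # cap runaway process spawning
        "--memory", "2g",                       # cap memory use
        "-p", f"127.0.0.1:{port}:{port}",       # publish on loopback only
        "--env-file", env_file,                 # dedicated, minimally scoped keys
        image,
    ]


if __name__ == "__main__":
    args = hardened_run_args("openclaw-local:latest", 8080, "./agent-keys.env")
    print(shlex.join(args))
```

The env file should hold keys created only for the agent, so revoking them never touches your primary accounts. If the agent needs to persist state, mount one dedicated volume with `-v` rather than dropping `--read-only`.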

Do not expose dashboards to the public internet. If you need external access, use a proper VPN or a zero-trust tunnel you control, and still assume the endpoint becomes a target.
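A quick way to catch an accidentally exposed dashboard is to check what address each service is bound to. Here is a minimal sketch using only the standard library. The inventory of listeners is hypothetical; in practice you would fill it from your own config or from a tool like `ss -ltn`.

```python
import ipaddress


def classify_bind(host: str) -> str:
    """Classify a listen address by who can reach it.

    'loopback' : only this machine
    'all'      : every interface (0.0.0.0 or ::), the risky catch-all
    'internal' : a non-global address such as a LAN or VPN range
    'public'   : globally routable
    Hostnames other than 'localhost' raise ValueError.
    """
    if host == "localhost":
        return "loopback"
    ip = ipaddress.ip_address(host)
    if ip.is_loopback:
        return "loopback"
    if ip.is_unspecified:  # catch before the is_global test, which would call it internal
        return "all"
    if not ip.is_global:
        return "internal"
    return "public"


# Hypothetical inventory of what an agent host is listening on.
LISTENERS = {"dashboard": "0.0.0.0", "webhooks": "127.0.0.1", "vpn-admin": "10.8.0.2"}

if __name__ == "__main__":
    for name, host in LISTENERS.items():
        verdict = classify_bind(host)
        flag = "  <-- review" if verdict in ("all", "public") else ""
        print(f"{name:10} {host:12} {verdict}{flag}")
```

Anything that reports `all` or `public` deserves a second look: rebind it to loopback and reach it through the VPN or tunnel instead.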

Treat every integration as a new doorway. Add only what you truly need. Remove what you do not use. The agent’s value comes from its ability to act, so you should be deliberate about what “act” means in your setup.
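Being deliberate about what "act" means usually comes down to a default-deny gate in front of every action. Here is a minimal sketch of that pattern. The tool names, handlers, and `ToolPolicy` class are hypothetical illustrations, not OpenClaw's plugin API.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class ToolPolicy:
    """Default-deny: a tool may run only if its name is explicitly allowlisted."""
    allowed: frozenset

    def check(self, tool: str) -> None:
        if tool not in self.allowed:
            raise PermissionError(f"tool {tool!r} is not allowlisted")


def run_tool(policy: ToolPolicy, handlers: dict[str, Callable[..., Any]],
             tool: str, *args: Any) -> Any:
    """Gate every agent-initiated action through the policy before dispatching."""
    policy.check(tool)  # refuse first, even if a handler is installed
    return handlers[tool](*args)


# Hypothetical tools: names and handlers are illustrative only.
HANDLERS = {
    "calendar.read": lambda: ["09:00 standup"],
    "notes.append": lambda text: f"appended: {text}",
    "shell.exec": lambda cmd: f"would run: {cmd}",  # installed, but not allowed
}
POLICY = ToolPolicy(allowed=frozenset({"calendar.read", "notes.append"}))

if __name__ == "__main__":
    print(run_tool(POLICY, HANDLERS, "notes.append", "buy milk"))
    try:
        run_tool(POLICY, HANDLERS, "shell.exec", "whoami")
    except PermissionError as err:
        print("blocked:", err)
```

The design choice is that installing a capability and permitting it are separate steps, so a prompt-injected request for an installed but unlisted tool fails closed.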

Most importantly, keep inference local when possible. If the agent is constantly shipping your context to third parties, you are not getting the sovereignty you think you are getting.
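One way to check whether your context stays home is to audit where each configured model endpoint points. Here is a minimal sketch, assuming your setup lists endpoints as URLs. The endpoint names and URLs below are hypothetical examples, not OpenClaw configuration keys.

```python
import ipaddress
from urllib.parse import urlsplit


def stays_on_box(url: str) -> bool:
    """True only if the endpoint's host is this machine (loopback).

    Any DNS name other than 'localhost' counts as remote, even if it happens
    to resolve locally: erring toward "remote" is the point of the audit.
    """
    host = urlsplit(url).hostname  # lowercased, IPv6 brackets stripped
    if host is None:
        return False
    if host == "localhost":
        return True
    try:
        return ipaddress.ip_address(host).is_loopback
    except ValueError:  # a DNS name, not an IP literal
        return False


# Hypothetical endpoint config for illustration.
ENDPOINTS = {
    "chat_model": "http://127.0.0.1:11434/v1",
    "embeddings": "https://api.example.com/v1/embeddings",
}

if __name__ == "__main__":
    for name, url in ENDPOINTS.items():
        where = "local" if stays_on_box(url) else "SENDS CONTEXT OFF-BOX"
        print(f"{name}: {where}")
```

This only inspects configured URLs. Plugins that make their own network calls need a separate check, such as egress rules on the container or VM.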

What this signals about the next phase of AI

OpenClaw’s rise is a signal that the market wants tools that live with the user, not tools that live inside an account.

Institutions will not like that. The natural reaction from regulators and platform gatekeepers is to reframe local agents as a security threat that “requires standards.”

Some standards will be legitimate. Many will be power moves that push the ecosystem back toward permissioned access, mandatory monitoring, and licensed deployment.

If you want local AI to survive politically, you need users who can run it responsibly and loudly refuse the idea that “safety” requires central control.

The bottom line

OpenClaw is part of the escape hatch. It is also a reminder: freedom tools demand adult supervision. If you can handle that bargain, this category is worth watching closely, and worth learning to run well.

Explore more from Popular AI.