Prohibited AI practices for thee…

The AI Act’s prohibition on behavior-distorting algorithms comes with escape hatches wide enough for Leviathan.

Man innovates, the EU regulates. We all know that the eurocrats love to strike a moral pose, and the freshly minted EU AI Act is little more than that. Buried in Article 5 is a ringing denunciation of “manipulative or deceptive techniques” in software:

“the placing on the market, the putting into service or the use of an AI system that deploys subliminal techniques beyond a person’s consciousness or purposefully manipulative or deceptive techniques, with the objective, or the effect of materially distorting the behaviour of a person or a group of persons by appreciably impairing their ability to make an informed decision, thereby causing them to take a decision that they would not have otherwise taken…”

That sounds noble, until you recall how Brussels ran its Covid-19 jab campaign. Commission task forces produced behavioural-science playbooks that sliced the population into psychological tribes, mapped “loss-aversion” triggers and taught doctors how to “build an influential voice on social media about vaccination” in order to corral fence-sitters into the injection line. A Joint Research Centre report candidly describes the goal as “identifying behavioural determinants of vaccine hesitancy” and using them to increase uptake. In other words, the very manipulative persuasion the Act now outlaws for private coders was fine when the message came from the state.

Covid-19 wasn’t the only instance where we saw this sort of manipulation at play. A foresight paper prepared for the Green Deal brags that “Member States actively shape behavioural change of citizens from consumerism to ecological consciousness… and use technologies to maximise their capacity to steer the change.” If an advertiser wrote that sentence the Commission would call it dark-pattern mind control. When the eco-bureaucracy says it, it becomes enlightened governance.

Article 5 also trumpets a ban on a Chinese-style social credit system:

“the placing on the market, the putting into service or the use of AI systems for the evaluation or classification of natural persons… based on their social behaviour… with the social score leading to detrimental or unfavourable treatment…”

But look closer. The first two verbs are “placing on the market” and “putting into service.” Those are the actions of private suppliers. Governments are covered only indirectly, and the ban is riddled with loopholes when a public authority invokes “law enforcement” or “national security.” Real-time facial recognition in a public square is forbidden, “unless… strictly necessary” to hunt terrorists, suspected criminals or even missing persons. History shows that once an exception exists it grows like bindweed.

Then comes the sleeper clause, point (f):

“the use of AI systems to infer emotions of a natural person in the areas of workplace and education institutions, except where the use… is intended… for medical or safety reasons.”

Pandemics, climate, terrorism, war: pick any “emergency,” imaginary or real, and bureaucrats will claim a medical or safety angle. The same people who insisted that showing a health pass to sip espresso was “for your safety” now hold a veto card that neutralizes their own prohibitions.

And how would this even work? How, exactly, will governments be able to draw on vast databases holding the biometric and medical data of millions of citizens “only in case of emergency” if they don’t spend plenty of time and resources collecting and storing that data when there is no sign of any such emergency?

Industry understands the game. Private builders must fill out risk logs, bias audits and “fundamental-rights impact assessments.” Slip on the compliance paperwork and you face fines of up to three percent of worldwide annual turnover; deploy a practice Brussels deems prohibited and the ceiling rises to seven percent. Meanwhile, officials who decide that emotion-tracking cameras are needed to enforce the next lockdown will glide through, no problem.

The Act’s social-credit language is also vague by design. It forbids scoring that leads to “unjustified or disproportionate” harm. Who decides what is justified and proportionate? The same regulators who push carbon-credit passports and mileage caps. When the Commission funds a pilot that rewards “eco-friendly behaviour,” no one in Brussels will see injustice. When a start-up tries a loyalty scheme that nudges shoppers toward sugary drinks, the hammer will fall.

This has been the Brussels formula for a generation: regulate society, exempt themselves, export the paperwork to every corner of the globe and call it “values-based leadership.”

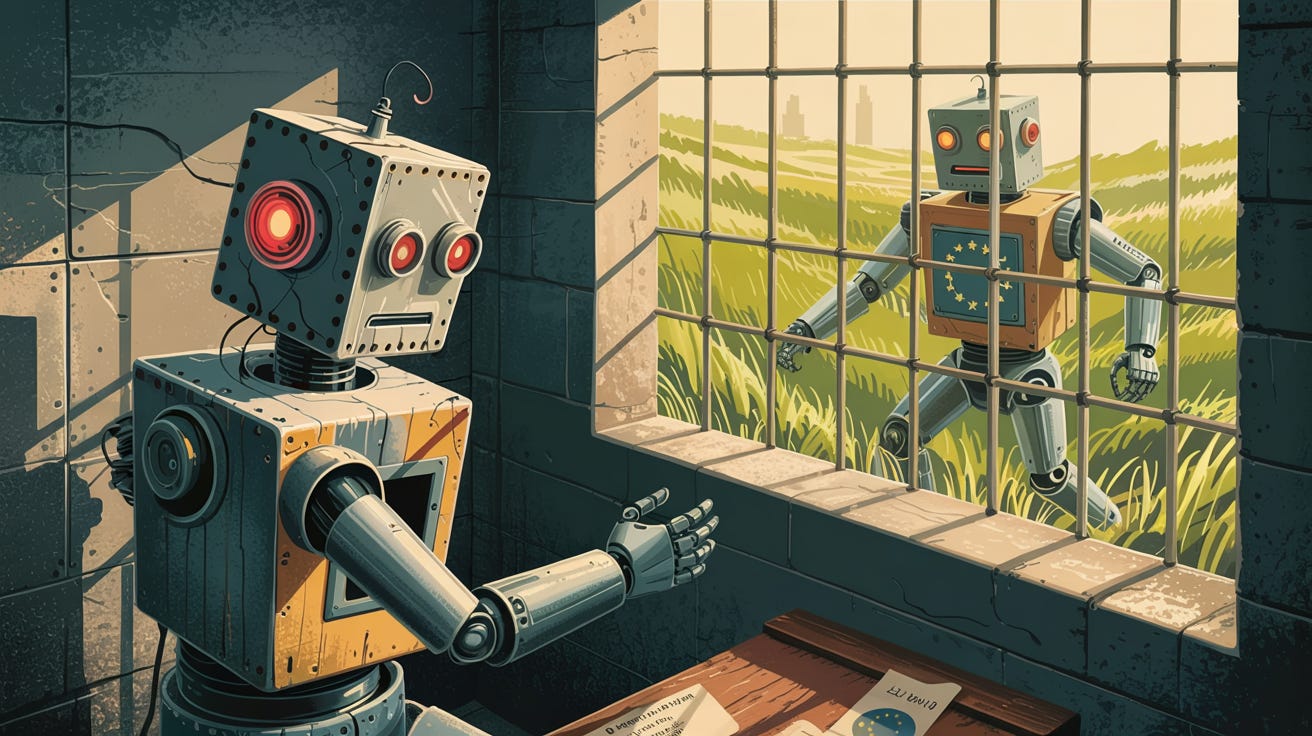

The lesson is straightforward. The finest words against manipulation, surveillance and social control are meaningless when the authors reserve for themselves the right to endlessly engage in them. The EU has written a rulebook that throttles private builders and grants public masters a toolbox they alone may use.

Discerning citizens should see the pattern for what it is: yet another regulation that shackles innovation while giving government an open runway to the very dystopian panopticon it claims to be trying to prevent.
