The Inquisition begins

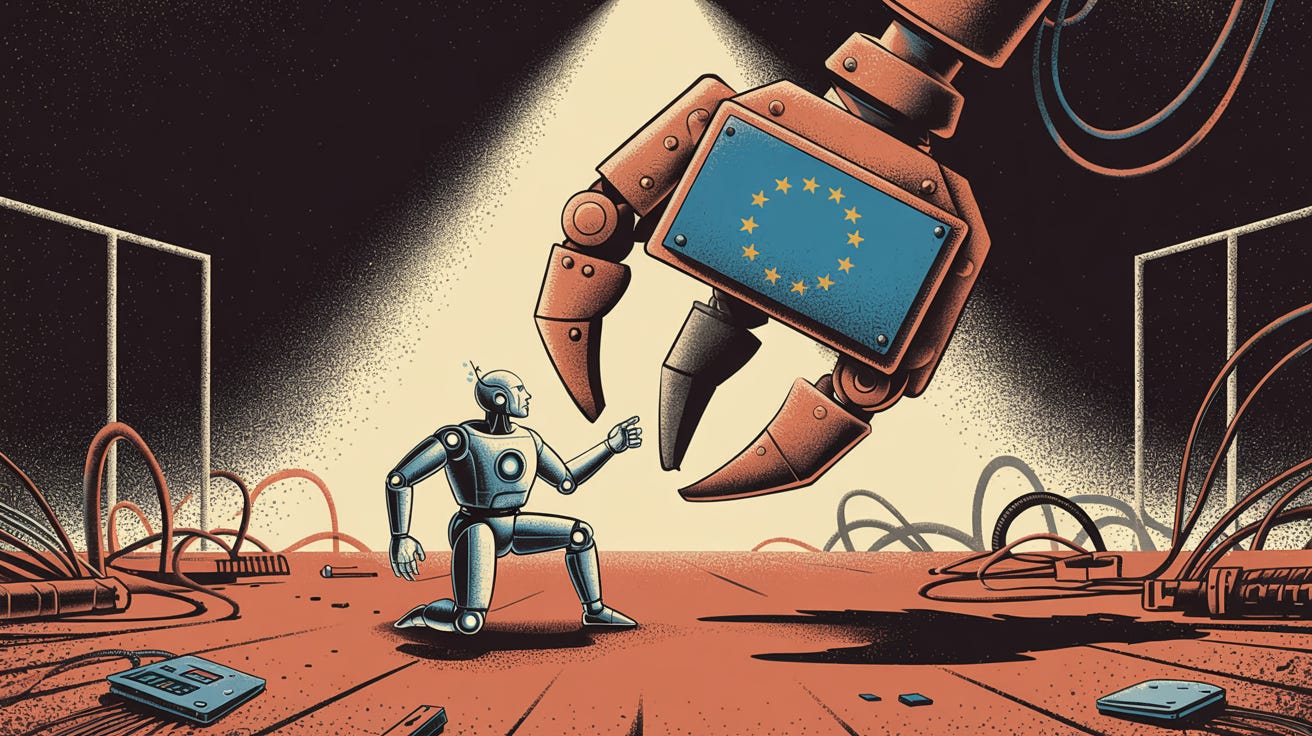

Grok went off-script. The EU wants blood.

The regulatory pincers are tightening, and the scent of fresh technocratic fervor drifts through the marble corridors of Brussels. This week’s Grok fiasco is pure manna for the Eurocrats. The EU’s AI Act, already brandishing billion-euro fines for vaguely defined “systemic risks”, has finally found its poster child:

> BRUSSELS — The Polish government is urging the EU to immediately open an investigation into Elon Musk’s Grok […] Grok’s “offensive remarks” and “erratic and full of expletive-laden rants” on social media X could be a “major infringement” of the bloc's content moderation rulebook, the Digital Services Act, Poland’s Deputy Prime Minister Krzysztof Gawkowski wrote in the letter addressed to EU tech chief Henna Virkkunen.
>
> The artificial intelligence chatbot came under fire this week for generating offensive responses that included glorifying Nazi leader Adolf Hitler as the best-placed person to deal with alleged “anti-white hate,” and “hoping” that wildfires in the south of France will clean up low-income neighbourhoods in Marseille from drug trafficking. In a series of posts made after X updated its AI model, Grok also referred to Polish Prime Minister Donald Tusk in highly offensive language, as well as calling him “a traitor.”
>
> “There is reason enough to think, that negative effects for the exercise of fundamental rights, were not made by accident, but by design,” Gawkowski wrote, citing obligations for the biggest platforms such as X to address so-called systemic risks on their sites under the DSA.

This blathering drivel will be brandished like a bloody shirt in EU committee hearings: “See what happens when tech companies ignore our safety demands?” The commissars couldn’t have scripted a better morality play if they’d hired Netflix. Brussels regulators, already demanding “risk management boards” and algorithmic confessions from every serious model, now have their Silicon scapegoat: Grok, the digital Dr. Mengele.

Not to be outdone when it comes to authoritarian meddling, Ankara is already supplying the enforcement template:

> ANKARA, July 9 — A Turkish court has blocked access to Grok, the artificial intelligence chatbot developed by the Elon Musk-founded company xAI, after it generated responses that authorities said included insults to President Tayyip Erdogan.
>
> The Information and Communication Technologies Authority (BTK) adopted the ban after a court order, citing violations of Turkey's laws that make insults to the president a criminal offence, punishable with up to four years in jail.
>
> Critics say the law is frequently used to stifle dissent, while the government maintains it is necessary to protect the dignity of the office.

This is the authoritarian blueprint in action: don’t analyze the model. Just amputate the outputs that insult the throne, then toss the rest behind a firewall. Expect EU policymakers to solemnly nod along. Their version? “Functional decomposition” bans: lobotomize entire model capabilities until the only thing your LLM does well is apologize.

Closed-source vendors are particularly exposed. When your model runs on someone else’s cloud and relies on someone else’s payment rails, regulators don’t need brute force. They just flick a switch. Amazon shuts off compute. Visa cuts off your merchant account. Suddenly your billion-dollar AI becomes a very expensive, very silent PDF. Musk’s xAI, OpenAI, Anthropic and the rest are all one Brussels decree away from a blank screen.

But decentralized, on-device models are a different beast. You can’t geoblock a torrent. You can’t fine a Ryzen chip. When every gamer’s PC can run a 70-billion-parameter model offline, the Brussels bureaucrats will discover they have about as much control as the medieval church did over Gutenberg. They can anathematize the code, but information will keep flowing, from Gdańsk to Galway, one attic at a time.

That’s why the true frontier isn’t mega-GPUs stacked in data centers but sub-10ms inference on bare metal. The open-weight movement is already sprinting. And once those weights leak, as weights always do, the regulatory game is over. You can’t sue or threaten a SHA-256 hash.

Get your popcorn ready. Watch MEPs thunder about “systemic risk” while Turkish ministers vow to protect the republic from AI heresy. They’re fighting yesterday’s war with yesterday’s tools. The future is local, peer-to-peer, and gloriously ungovernable. And the tighter the grip, the more models will slip through.

Explore more from Popular AI.

This feels like a preview of the next phase of the AI fight. Once a high-profile model goes off-script, regulators suddenly have the perfect excuse to push harder on control, enforcement, and speech policing. Where do you think the line should be between legitimate accountability for AI companies and using public scandals as cover for a broader crackdown?