Open source generative AI is coming for the intellectual property industry at the exact point where that industry has always been strongest: scarcity.

For decades, entertainment companies and rights holders built their businesses around control. They controlled what got financed, what got distributed, what reached an audience, and what could legally be copied. That system worked because most people had no realistic way to make substitutes for the movies, songs, comics, or games they wanted. They had to rent, buy, or subscribe to whatever was available.

That assumption is starting to break. Movie night no longer has to mean picking from a studio catalog. In the near future, it could mean typing a prompt and generating home cinema tailored to your tastes. Search is already moving in that direction. Google’s AI Overviews have triggered an antitrust complaint from independent publishers in Europe, and the core complaint is easy to understand: synthesis can absorb value that used to flow to the original source. Entertainment looks like the next arena where that logic scales.

Scarcity is losing its grip

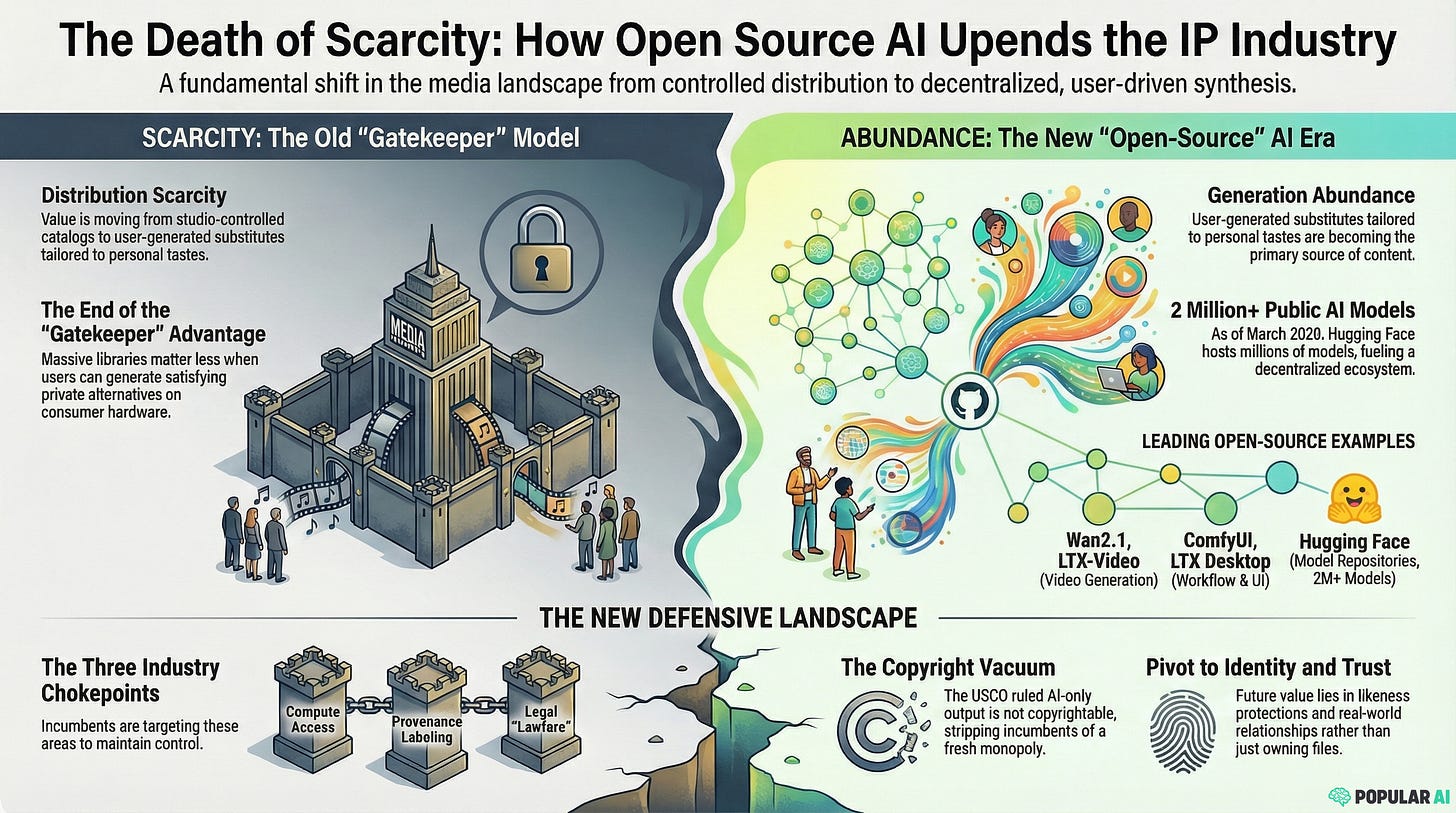

The big shift is not that AI can produce a perfect replacement for every copyrighted work today. It is that the industry is moving from distribution scarcity to generation abundance.

That hits the intellectual property business where it hurts, because it has long depended on limited supply. Studios, labels, publishers, and platform gatekeepers did not just own content. They owned access to content at scale. Once users can create a private substitute that is close enough, fast enough, and cheap enough, that old advantage starts to erode.

This is why open source generative AI is such a serious threat. Closed models can be licensed, throttled, and steered. Open models spread. They get forked, optimized, and pushed into tools ordinary people can run without asking anyone for permission.


The tools are already available

This is no longer a science fiction argument. Open video generation is already public. The Wan2.1 release includes open video models, inference code, checkpoints, and integrations, which gives developers and hobbyists a real base to build on. The broader ecosystem around local generation is also getting stronger, with tools like LTX-Video, LTX Desktop, and ComfyUI making it easier to run image and video workflows on consumer hardware. Hugging Face has stated it hosted more than 2 million public models as of March 2026.

But the practical consequences are bigger than any one repository. This impending shift in the entertainment industry changes the user experience from browsing to synthesis. Instead of choosing what a gatekeeper financed and cleared, the user asks for a result and gets something shaped around their preferences. That could be a movie with a specific mood, a song in the style of a favorite era, a comic with familiar visual cues, or a game that blends mechanics from several genres.

Technically, the ceiling keeps rising.

Why giant catalogs become less defensible

When that shift happens, every pillar of the IP business gets weaker at once.

Production gets cheaper because the cost of generating a first draft, alternate cut, or custom variation collapses. Distribution gets weaker because the user does not always need a licensed copy if a generated substitute is good enough for private consumption. Enforcement gets harder because creation can happen locally, behind closed doors, on a personal machine.

That does not mean famous franchises, celebrity brands, or premium releases suddenly become worthless. It means their value changes. A giant catalog matters less when a user can generate a personally satisfying alternative on demand. The old edge of scale starts to fade when supply explodes.

In that world, the most defensible assets are not just libraries of files. They are trust, fandom, access, identity, community, and real-world relationships with audiences.


AI copyright is a double-edged sword for the entertainment kakistocracy

Rights holders often respond to new technology by trying to extend the legal perimeter. The instinct is simple: if the market is changing, create a new right, expand an old one, or tighten enforcement.

That strategy may not work as cleanly for AI output as many big entertainment players would like. The U.S. Copyright Office’s January 2025 report on copyrightability says that material generated wholly by AI is not copyrightable under existing law, that prompts alone do not provide sufficient human control, and that the case for a new sui generis right has not been made. This presents the current entertainment industry with two choices: embrace AI at the cost of giving up its copyright-based business model, or allow smaller players to flood the zone with machine-generated content that appeals to real consumers. In other words, the business model that has served it well in the past, where it buys the rights to a beloved franchise and then ritually slaughters it as captive audiences are forced to watch in horror, will become untenable. It is only a matter of time until those smaller players offer similar music, movies, brands, and franchises that actually cater to real consumers instead of diversity quotas or Larry Fink’s cringe and ridiculous ESG scores.

At the same time, the U.S. Copyright Office’s report on digital replicas reaches a different conclusion on identity. There, the Office says new federal legislation is urgently needed. That points toward a legal regime that may resist broad copyright claims over fully AI-generated works while becoming much more aggressive about voice, face, and likeness protections.

This could lead to a very unfavorable future for the entertainment kakistocracy. Embracing generative AI as the future of entertainment would force the Hollywood Epsteinocracy to at least partially cease its unseemly practice of squeezing established intellectual properties for fast cash. The current legal copyright limitations on AI-generated content would effectively force it to compete fairly with the rest of the world.

Alternatively, and more likely, it could simply double down on the same lazy, shady copyright and intellectual property shenanigans it has been pulling for the last few decades. But even going that route, it will likely have fewer avenues to monetize the likeness of its stars, with whom the public is already becoming less and less enamored.

That split is interesting, to say the least. Depending on which strategy the legacy entertainment industry chooses, we could see it either lobby for copyright protection of its own AI-generated content or vehemently resist copyright protection to harm AI-generated competition. Either way, it will be difficult for the entertainment industry to have its cake and eat it too.

The counterattack will target the chokepoints

The industry and governments are unlikely to stop open generative technology itself, and that is making them nervous. Once models and code are loose, direct suppression gets harder. Hence, we can expect them to come up with sneaky, sniveling excuses to pressure the chokepoints that would enable truly democratized AI technology.

Compute is the first obvious target. A BIS proposal from January 2024 shows where this could go by outlining rules for U.S. cloud infrastructure providers that would include customer identification requirements and other controls tied to risky uses, including training large AI models. That is a preview of how policymakers can govern frontier AI through rented GPUs and cloud access, even if open models themselves remain available.

The second chokepoint is provenance and labeling. The EU’s work around Article 50 transparency obligations for AI-generated and manipulated content fits that pattern. The public pitch is safety, authenticity, and trust. The practical effect could be much broader. Once provenance standards and labeling duties are wired into platforms, app stores, ad systems, and payment rails, those intermediaries gain more power to decide which tools look “legitimate” and which ones get pushed to the margins.

The third chokepoint is lawfare. Lawsuits over training data, music generation, and digital likeness are not only about damages. They give the legacy creative industries a way to strike back at a technology that is about to make them obsolete. We can definitely expect legal action undertaken purely as sabotage: making open generative systems harder to host, distribute, finance, and normalize. The point is to raise the legal temperature around the entire AI technology stack.

What still holds value in an age of synthesis

None of this means the old industry disappears overnight. Official releases still matter. Live performances still matter. Trusted brands still matter. So do authenticated editions, direct creator relationships, licensed likenesses, fan communities, and experiences people cannot recreate with a prompt.

But the center of gravity shifts. Value moves away from pure control over copies and toward trust, access, and identity. When synthetic supply becomes abundant, audiences still care about what is real, what is official, what feels socially meaningful, and what connects them to a broader community.

That is why the most resilient companies will probably be the ones that treat AI as a challenge to their distribution logic, not just a tool for cheaper production.

The real fight is over who controls the tech

Open source generative AI turns culture from a product you select into an experience you generate. This instantly democratizes entertainment in ways that were unthinkable before, and that is also the deepest reason it threatens the intellectual property industry. The idea that you could type one prompt, generate your own Star Wars movie on your own computer, and watch it on your own TV in the privacy of your own living room, rather than fork out half a paycheck to consume Disney’s latest ideological slop, rightfully frightens the entertainment business to its core.

You will no longer need to watch woke celebrities and politically correct writing ruin the classic franchises you grew up with. You will be able to completely replace them with a click of a button. Your day no longer needs to be dominated by the depressing, demoralizing, low energy garbage that the music industry vomits out. Instead, you can march to the beat of music you generate.

The economic logic of paying for controlled media disappears when personal synthesis becomes normal.

The biggest question is not whether people will want personalized synthetic culture. They will. The real question is who gets to decide the terms on which that culture is made.

Whoever controls the machine closest to the user will have the strongest claim on that future.
