5 budget GPUs that make local AI image generation feel fast

The best cheap GPUs for Stable Diffusion, SDXL, and FLUX workflows, with clear picks for used deals, new cards, and stretch-budget buys.

Running image generation locally still makes sense in 2026 for the same reasons it always has. It cuts recurring cloud costs, keeps personal files and prompts off someone else’s server, and gives you more control over how and where your tools run. The real buying question is not which GPU tops a gaming chart. It is which GPU is cheap enough to justify and still has enough memory to make daily work in ComfyUI, AUTOMATIC1111, Forge, or similar tools feel smooth instead of fragile.

ComfyUI’s current hardware guidance still makes NVIDIA the easiest mainstream route for most people, lists Intel Arc support through native torch.xpu, and describes current AMD RDNA 3, 3.5, and 4 support on Windows and Linux as experimental. AUTOMATIC1111 still notes support for 4GB cards and reports of training on 6GB or 8GB GPUs, while the current Hugging Face FLUX documentation makes it clear that newer workflows often need offloading, quantization, or both to stay practical on consumer hardware.

That pushes this ranking in a very specific direction. For local image generation, 12GB is the floor where things start to feel comfortable, especially once SDXL, ControlNet, and more complex graphs enter the picture. Sixteen gigabytes is the real comfort tier if you want room to grow. Tom’s Hardware’s Stable Diffusion testing points to the same conclusion, with several 8GB cards running into obvious memory limits in heavier scenarios. Because exact board partners and stock change constantly, the buy links below use Amazon search pages instead of frozen listings.
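To check where a card you already own falls against those tiers, here is a minimal Python sketch. The tier names and the `vram_tier` helper are my own illustration of the 12GB and 16GB thresholds above, not part of any tool. The detection step assumes PyTorch is installed with CUDA support and simply returns nothing if it is not.

```python
def vram_tier(vram_gb: float) -> str:
    """Map a VRAM figure to the tiers used in this article (illustrative labels)."""
    if vram_gb >= 16:
        return "comfort"
    if vram_gb >= 12:
        return "floor"
    return "cramped"


def detect_cuda_vram_gb():
    """Return total VRAM of CUDA device 0 in GiB, or None if it cannot be read."""
    try:
        import torch  # optional dependency, only needed for detection
    except ImportError:
        return None
    if not torch.cuda.is_available():
        return None
    total_bytes = torch.cuda.get_device_properties(0).total_memory
    return total_bytes / 1024**3


if __name__ == "__main__":
    detected = detect_cuda_vram_gb()
    if detected is None:
        print("No CUDA device detected; call vram_tier() with your card's VRAM.")
    else:
        # Drivers can report slightly less than the marketed size, so round first.
        print(f"{detected:.1f} GiB -> {vram_tier(round(detected))}")
```

On a machine without an NVIDIA card, calling `vram_tier(12)` or `vram_tier(16)` directly gives the same classification.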

Disclosure: This post includes Amazon affiliate links. If you buy through them, Popular AI may earn a small commission at no extra cost to you.

1) Best overall cheap pick: NVIDIA GeForce RTX 3060 12GB

The GeForce RTX 3060 is still the budget card that solves the right problem. NVIDIA’s official specs list the 3060 with 12GB of GDDR6 on a 192-bit bus, and that matters a lot more for local image generation than gaming prestige in 2026. It is old enough to be affordable, it still gets the benefit of NVIDIA’s broad CUDA support, and it avoids the setup friction that pushes many first-time local AI users into the weeds. On March 25, 2026, the RTX 3060 EU price tracker showed used pricing around €264.44 while new Amazon stock sat dramatically higher, which tells you exactly where the value lives.

In practical use, the 3060 12GB is still a very workable card for SD 1.5, straightforward SDXL jobs, inpainting, upscaling, thumbnails, mockups, product images, YouTube art, and lighter ControlNet graphs. It is also an easy recommendation for people who want a card that simply works in mainstream local workflows without a week of tuning. Community comparison tables at Prompting Pixels are useful for seeing where 3060-class cards still sit in the broader local image generation stack.

The catch is simple. This is a used-market recommendation, not a reason to pay collector pricing for leftover new stock. When the price is right, the 3060 remains the best true budget entry point for people who care more about usable VRAM than bragging rights. For live listings, search Amazon for RTX 3060 12GB.

2) Best stretch-budget buy: NVIDIA GeForce RTX 4060 Ti 16GB

The GeForce RTX 4060 Ti is a mediocre conversation starter in gaming circles and a much better local AI card than that reputation suggests. NVIDIA’s own specs list the 4060 Ti with either 8GB or 16GB of GDDR6, and the 16GB version is the only one that really matters for this discussion. That extra memory gives you more breathing room for SDXL, higher resolutions, larger batch sizes, and heavier node chains that start to feel cramped on 12GB cards.

It also has a real speed case. In Puget Systems’ Stable Diffusion testing, the RTX 4060 Ti was nearly 43% faster than the 3060 Ti in image generation. That does not automatically make it the best value at every price, but it explains why the card feels comfortably modern in day-to-day local AI work. This is the card for readers who want local generation to feel relaxed instead of barely acceptable.

Price discipline still decides whether this one is smart. Used listings can start around the mid-$400s, and the 4060 Ti 16GB only makes sense when the discount is deep enough to justify it over a cheaper used 3060 or a faster 4070-class card. Before buying, compare current RTX 4060 Ti listings on eBay with Amazon search results for the RTX 4060 Ti 16GB.

3) Best speed-per-watt value: NVIDIA GeForce RTX 4070 12GB

The GeForce RTX 4070 is the pick for people who care about throughput, responsiveness, and power efficiency more than maximum VRAM. NVIDIA lists the RTX 4070 with 5,888 CUDA cores and a 12GB configuration on a 192-bit interface, and that combination still feels quick in real-world image generation. On Prompting Pixels’ GPU benchmark table, the RTX 4070 posts an average of 16.5 iterations per second, which lines up with why it feels snappy when you are iterating through prompts, inpainting, or testing variations in ComfyUI.
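To turn an iterations-per-second figure into something you can feel, divide the number of sampling steps by the rate. The sketch below uses the 16.5 it/s figure quoted above and assumes a 30-step generation. The step count is my assumption for illustration, and the result ignores model loading, VAE decoding, and any resolution or model differences from the benchmark's own settings.

```python
def seconds_per_image(iterations_per_second: float, steps: int) -> float:
    """Rough sampling time for one image: steps divided by iterations per second."""
    return steps / iterations_per_second


RTX_4070_ITS = 16.5   # average from the benchmark table cited above
ASSUMED_STEPS = 30    # assumption: a typical step count, not from the benchmark

estimate = seconds_per_image(RTX_4070_ITS, ASSUMED_STEPS)
print(f"About {estimate:.2f} s of sampling per image")  # 30 / 16.5 = 1.82
```

Roughly two seconds of sampling per image is the range where prompt iteration stops feeling like waiting.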

This is a strong fit for SDXL-heavy work, creator workflows where time matters, and anyone producing a steady flow of concept art, marketing images, blog graphics, or game assets. If the 3060 is the value play and the 4060 Ti 16GB is the comfort play, the 4070 is the efficiency play. It gives you a more modern feel without stepping into truly expensive territory. (Prompting Pixels)

The tradeoff is the same one it has always had. Twelve gigabytes is enough for a lot of work, but it is still a ceiling. That matters once your graphs get ambitious or your attention shifts toward newer FLUX-style workloads. Even so, a March 25, 2026 EU market snapshot put the RTX 4070 at about €637 new and roughly €510 used, which is why used 4070 cards remain such an attractive step up when you want faster daily performance without the power draw of an older brute-force card. For current inventory, search Amazon for RTX 4070 12GB.

4) Best cheap new-card wildcard: Intel Arc B580 12GB

If you want to buy new and keep costs close to entry-level money, the Intel Arc B580 deserves real attention. Intel’s published specs list 12GB of GDDR6, a 192-bit memory interface, 456 GB/s of memory bandwidth, and 190W total board power. ComfyUI’s current manual installation guidance also lists Intel Arc with native torch.xpu support, which makes this one of the few non-NVIDIA budget cards that feels realistic for local image generation in 2026.
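Those bandwidth and bus-width numbers are tied together by simple arithmetic: bandwidth in GB/s is the bus width in bytes multiplied by the per-pin data rate in Gbps. The short Python sketch below works backward from the 192-bit and 456 GB/s figures quoted above. The function names are mine, and the data rate it prints is derived from those two numbers rather than taken from Intel's spec sheet.

```python
def memory_bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak memory bandwidth: bytes moved per transfer times transfers per second."""
    return bus_width_bits / 8 * data_rate_gbps


def implied_data_rate_gbps(bus_width_bits: int, bandwidth_gb_s: float) -> float:
    """Per-pin data rate implied by a quoted bus width and bandwidth."""
    return bandwidth_gb_s / (bus_width_bits / 8)


# Figures quoted in this article for the Arc B580.
rate = implied_data_rate_gbps(192, 456.0)
print(f"Implied data rate: {rate:.1f} Gbps per pin")
print(f"Round trip: {memory_bandwidth_gb_s(192, rate):.0f} GB/s")
```

The same formula explains why a 384-bit card can move more data than a 192-bit card at a similar per-pin data rate, which is part of the 3080 Ti's appeal later in this list.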

The maturity gap is still real. NVIDIA remains easier, CUDA support is broader, and community troubleshooting is better. But value matters too. On March 25, 2026, the Arc B580 price tracker showed the card around $299 on Amazon, around $300 used on eBay, and a $249 launch MSRP. That makes it one of the few genuinely interesting new-budget options for local image generation if you do not want secondhand hardware.

The B580 is the right buy for a tinkerer who wants a new card, enough VRAM to avoid immediate regret, and a lower up-front cost than NVIDIA’s better-known options. For live listings, search Amazon for Intel Arc B580.

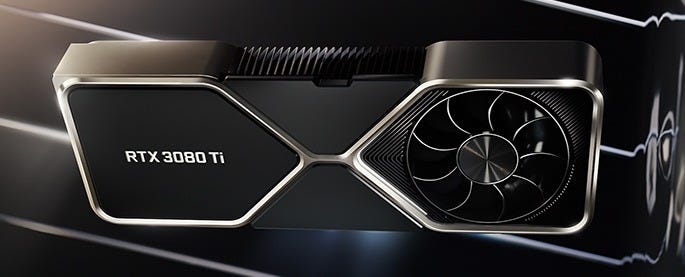

5) Best used brute-force deal: NVIDIA GeForce RTX 3080 Ti 12GB

The RTX 3080 Ti is the old bruiser in this group. MSI’s published specs for a representative 3080 Ti board show 10,240 CUDA cores, 12GB of GDDR6X, a 384-bit bus, 350W power draw, and a 750W recommended PSU. Those are still useful numbers for local image generation because bandwidth and raw compute matter once your workflows get heavier and your patience gets shorter.

As usual, the value only exists in the used market. On March 25, 2026, the RTX 3080 Ti EU price tracker showed used pricing around €449.63 while new Amazon stock was up near €1,131. That makes this a used-only recommendation for buyers with a real PSU, decent airflow, and no illusions about heat or power draw.

This is the card for readers who want far more speed than a 3060 without paying modern flagship money. The downside is exactly what the specs suggest. It is hotter, louder, and less elegant than the more efficient cards above it. For current board-partner inventory, search Amazon for RTX 3080 Ti 12GB.

Why these five made the cut

A lot of 8GB cards missed the list because 8GB is where local image generation starts to feel cramped fast. Tom’s Hardware’s benchmark roundup showed several 8GB AMD cards failing to render at higher target outputs, which is exactly the kind of bottleneck that makes a “cheap” GPU feel expensive once you actually try to use it. On top of that, ComfyUI’s system requirements still position AMD’s current RDNA 3, 3.5, and 4 support on Windows and Linux as experimental, while NVIDIA remains the lower-friction route for most mainstream users. That does not make AMD useless. It simply means this ranking favors cards that are more likely to work cleanly for ordinary readers.

What I would buy at each budget

Below about $300, I would still start with a used RTX 3060 12GB. It remains the cleanest budget answer because 12GB of VRAM still matters more than a prettier launch year. If you refuse used hardware, the Intel Arc B580 is the most credible new-card alternative in this price zone.

Around $450 to $550, the choice depends on what annoys you more. Buy the RTX 4060 Ti 16GB if you want extra headroom and a calmer long-term experience in SDXL and heavier graphs. Buy a used RTX 4070 if you care more about speed, efficiency, and day-to-day responsiveness. Buy a used RTX 3080 Ti only if your case, PSU, and tolerance for heat are ready for it.
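One quick way to sanity-check these tiers is cost per gigabyte of VRAM. The sketch below uses only the used EU prices quoted in the picks above, so the three cards share a currency. It is a rough lens rather than a verdict, because it ignores speed, power draw, and software support.

```python
# Used EU prices quoted in this article (March 25, 2026 snapshots): (euros, VRAM in GB).
USED_PRICES_EUR = {
    "RTX 3060 12GB": (264.44, 12),
    "RTX 4070 12GB": (510.00, 12),
    "RTX 3080 Ti 12GB": (449.63, 12),
}


def euros_per_gb(price_eur: float, vram_gb: int) -> float:
    """Price divided by VRAM capacity."""
    return price_eur / vram_gb


# Sort cheapest-per-GB first.
ranked = sorted(USED_PRICES_EUR.items(), key=lambda item: euros_per_gb(*item[1]))
for name, (price, vram) in ranked:
    print(f"{name}: {euros_per_gb(price, vram):.2f} EUR per GB")
```

The 3060 comes out far ahead on this measure, which is the whole case for it. The 4070 and 3080 Ti cost more per gigabyte because what you are paying for is speed.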

If your real goal is FLUX, the answer is still brutal and simple. Buy as much VRAM as you can reasonably afford, and expect to lean on quantization or offloading. Hugging Face’s current FLUX documentation says the model family can require roughly 50GB of RAM or VRAM to load all modeling components before optimizations reduce the footprint, which tells you how far newer workflows have moved from old SD 1.5 assumptions.
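A back-of-the-envelope calculation shows why quantization matters so much here. The sketch below assumes a 12-billion-parameter image transformer, which is my illustrative figure and is not taken from the documentation cited above. It counts weights only, so text encoders, the VAE, and activation memory all come on top.

```python
def weight_footprint_gb(params_billions: float, bits_per_param: int) -> float:
    """Approximate weight size in GB (1 GB = 1e9 bytes): parameters times bytes each."""
    return params_billions * bits_per_param / 8


ASSUMED_PARAMS_BILLIONS = 12  # assumption for illustration, weights of one model only

for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
    size_gb = weight_footprint_gb(ASSUMED_PARAMS_BILLIONS, bits)
    # Weights must leave room for activations, so "fits" here means strictly smaller.
    fits_12 = "yes" if size_gb < 12 else "no"
    fits_16 = "yes" if size_gb < 16 else "no"
    print(f"{label}: {size_gb:.0f} GB of weights | under 12GB: {fits_12} | under 16GB: {fits_16}")
```

Under these assumptions, 16-bit weights alone overflow every card on this list, 8-bit weights squeeze onto a 16GB card with little room to spare, and 4-bit weights leave working headroom on 12GB. That is why offloading and quantization come up in almost every FLUX setup guide.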

Bottom line

For cheap local image generation in 2026, the winning strategy has not changed. Buy VRAM first. Buy software support second. Buy gaming prestige last.

That is why the RTX 3060 12GB remains the best true budget pick, the RTX 4060 Ti 16GB is the best stretch-budget buy, the RTX 4070 is the best efficient step up, the Intel Arc B580 is the best cheap new wildcard, and the RTX 3080 Ti is the best brute-force used deal. For broader context, ComfyUI’s hardware notes, AUTOMATIC1111’s project page, Tom’s Hardware’s benchmark roundup, and the latest FLUX documentation from Hugging Face all point in the same direction. Twelve gigabytes is the floor. Sixteen gigabytes is the comfort tier. Friction-free software support still matters as much as raw silicon.
