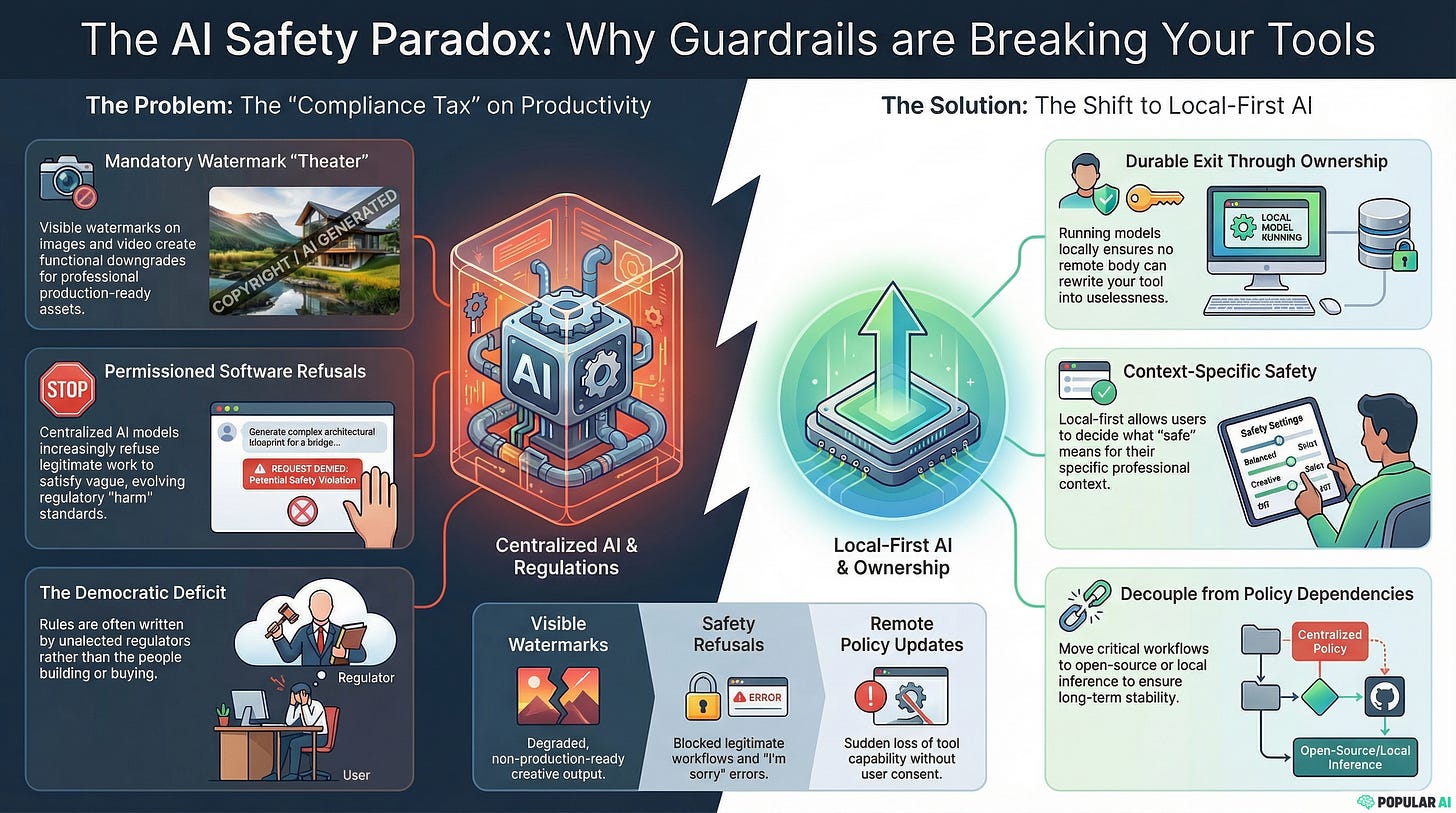

When “AI safety” makes the product useless

Tell me if this sounds familiar: you spend minutes masterfully crafting your prompt, only for the AI to spit back an “I’m sorry, I can’t help you with that.” Well, you’re not alone.

Commercial AI is marketed like an easy button. Pay the subscription, tap world-class capability, ship faster.

A growing slice of “premium” centralized AI now arrives with a quiet condition. You can use it, as long as you do not use it too effectively, too freely, or in ways that regulators might find socially inconvenient.

That is the core failure mode of black box AI. In the cloud it is centralized, and it is permissioned. The permission layer is increasingly written by people who do not buy the product, do not build the product, and do not pay the cost when the product becomes cosmetically “safe” and practically worthless.

The democratic deficit behind the guardrails

Start with the power structure that shapes what vendors will ship.

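In the EU, the European Commission has major agenda-setting and regulatory influence, but it is not directly elected by European voters. Its President is proposed by national leaders and its members by national governments, with the European Parliament voting to approve them, as the Commission itself explains in its overview of how the Commission is appointed. That is a long way from “the public can hire and fire the people who write the rules.”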

In the UK, the direction is different, but the feeling is familiar. A steadily expanding compliance regime around online speech and “harm” pushes enforcement power into regulators and codes of practice. The Online Safety Act framework gives Ofcom a central role in risk assessments and duties, as described in the government’s Online Safety Act explainer.

Whether you call it a democratic deficit or administrative state creep, the operational result is the same. Rules emerge that reflect institutional incentives more than public common sense. Then vendors pre-comply, because the easiest way to avoid political heat is to over-constrain the product.

AI is simply the newest victim.

Black box AI becomes permissioned software

A black box model is more than an algorithm. In a centralized setup, it becomes a service with an evolving rulebook.

That rulebook includes refusals, throttles, content filters, and increasingly, visible “provenance” features that change what the tool is good for. Constraints exist in every product. What matters is who decides them, and how quickly they can change them for everyone.

If you are building a business workflow on top of a cloud model, your dependency is not just on compute. Your dependency is on policy.

Watermark theater and the compliance tax you can see

The easiest compliance feature to notice is the one stamped on the output.

Google’s story starts with invisible watermarking. Its SynthID system is presented as imperceptible to people, detectable with the right tools, and designed to survive common edits.

Then the visible layer arrives, and the utility trade-off becomes obvious. A large watermark across a generated video is not a subtle provenance signal. It is a constraint on professional use.

This is where “provenance” stops being about verification and starts being about making the product safe for politics. The Verge has followed Google’s broader push for detection tools around SynthID and provenance, including coverage of a SynthID detector for AI-generated content.

OpenAI has leaned into a similar approach with Sora. In its launch write-up, OpenAI says Sora outputs carry both visible and invisible provenance signals, and that at launch all outputs carry a visible watermark alongside C2PA metadata.

The Verge has also explored what this means for detection and enforcement in practice, in a piece on how broken deepfake detection looks in the age of Sora.

Vendors could have focused on giving users optional verification tools. Instead, many rollouts lean on output stamping that makes the result harder to use in the exact contexts where paying customers need clean assets.

If you are paying for a high-end image editor or video generator because you want production-ready creative assets, a visible watermark is a functional downgrade.

Why watermarking does not stop real disinformation

Mandatory watermarking is usually justified as a defense against disinformation and scams. The problem is that watermarking targets the wrong adversary.

Serious disinformation operations do not need your consumer cloud plan. They can afford local pipelines, on-prem systems, or bespoke tooling. Meanwhile, watermarking mainly burdens ordinary users and small businesses who chose commercial AI precisely to avoid building infrastructure.

Even on its own terms, provenance is messy. Metadata can be stripped by routine workflows like re-uploads, platform processing, screenshots, and re-encoding. OpenAI’s own documentation on C2PA in ChatGPT images makes the point plainly. Metadata is helpful when it survives, and it can be removed accidentally or intentionally.
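To make the fragility concrete, here is a minimal sketch in Python, assuming a JPEG whose C2PA manifest is embedded in APP11 segments (where the C2PA specification places its JUMBF data for JPEG files). It shows that the manifest lives in its own container segment, separate from the image data, so any tool that rewrites the file without copying that segment drops the provenance while leaving the picture untouched. The function names and the tiny synthetic byte stream are hypothetical illustrations, not part of any real tool, and the parser skips edge cases such as fill bytes.

```python
import struct

SOI = b"\xff\xd8"  # Start of Image: every JPEG stream begins with these two bytes
APP11 = 0xEB       # APP11 marker; assumed here to carry the C2PA manifest (JUMBF)
SOS = 0xDA         # Start of Scan: compressed pixel data follows this header


def iter_segments(data: bytes):
    """Yield (marker, start, end) for each header segment, stopping after SOS."""
    if not data.startswith(SOI):
        raise ValueError("not a JPEG stream")
    pos = 2
    while pos + 4 <= len(data):
        if data[pos] != 0xFF:
            raise ValueError(f"expected a marker at offset {pos}")
        marker = data[pos + 1]
        # The 2-byte big-endian length counts itself plus the payload.
        (length,) = struct.unpack(">H", data[pos + 2:pos + 4])
        end = pos + 2 + length
        yield marker, pos, end
        if marker == SOS:
            return
        pos = end


def markers(data: bytes) -> list[int]:
    """List the header markers present in the stream."""
    return [marker for marker, _, _ in iter_segments(data)]


def strip_app11(data: bytes) -> bytes:
    """Copy the stream, skipping APP11 segments; pixel data is copied verbatim."""
    out = bytearray(SOI)
    tail_start = 2
    for marker, start, end in iter_segments(data):
        if marker != APP11:
            out += data[start:end]
        tail_start = end
    out += data[tail_start:]  # entropy-coded scan data plus the EOI marker
    return bytes(out)


# Synthetic stream: SOI, APP0, APP11 (stand-in "manifest"), SOS header, scan bytes, EOI.
sample = (
    SOI
    + b"\xff\xe0\x00\x06JFIF"
    + b"\xff\xeb\x00\x07c2pa!"
    + b"\xff\xda\x00\x04\x01\x02"
    + b"\x12\x34\xff\xd9"
)
cleaned = strip_app11(sample)
print([hex(m) for m in markers(sample)])   # ['0xe0', '0xeb', '0xda']
print([hex(m) for m in markers(cleaned)])  # ['0xe0', '0xda']
```

Nothing here touches the image bytes. A platform that decodes and re-encodes the pixels can lose the segment the same way, simply by never copying it into the new file, which is why stripping so often happens without anyone intending it.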

Researchers keep pointing out that watermark robustness is hard, and the arms race often favors removal tools and adversarial transformations. Nature’s reporting on the topic, in “AI watermarking must be watertight to be effective”, captures how difficult it is to make a watermark that survives real-world editing.

The EFF’s critique is blunter. Watermarking sounds straightforward, but it is unlikely to curb disinformation in practice, especially against adversaries who want to evade it, as argued in EFF’s analysis of why AI watermarking will not curb disinformation.

The University of Waterloo publicized “UnMarker” as a way to show how AI image watermarks can be removed, and why they do not meaningfully defend against deepfakes. Their explainer, “Watermarks offer no defense against deepfakes,” reads like a warning label for policymakers who treat watermarking as a solved problem.

So you end up with the worst of both worlds. Professional users are penalized with degraded output, while sophisticated actors route around the regime.

When regulation “fixes” products by breaking them

Europeans have seen this movie before. Regulation that might be defensible in isolation metastasizes into user experience vandalism and capability loss.

Cookie banners are a perfect example. Whatever your views on privacy law, the lived experience is universal. Endless popups, consent fatigue, and a web that feels designed by compliance counsel. Even industry lawyers discuss “banner fatigue,” because everyone can see the result, as described by Osborne Clarke’s commentary on cookie banner fatigue.

Cars are another. The EU’s General Safety Regulation rollout made driver assistance systems mandatory for new vehicles sold from July 7, 2024, including Intelligent Speed Assistance. The Commission’s summary of the rollout is in its note on mandatory driver assistance systems.

Maybe you like these systems. Maybe you hate them. The structural point is that product design is increasingly downstream of regulatory checklists, not customer preference.

Now apply the same dynamic to AI. Provenance obligations and “harm” duties drive visible marking, aggressive refusals, and internal “values” alignment that has little to do with whether the tool helps you do your job.

The money adds insult to injury

Governments are not neutral spectators in the AI economy. They fund, subsidize, and politically bless large parts of the ecosystem.

The EU frames AI investment as a strategic priority inside Horizon Europe, its major research and innovation funding program. The Commission’s own description of Horizon Europe lays out the overall envelope and ambition.

Commission leadership has also touted massive AI investment ambitions in public statements. Reuters covered the proposed boost to EU AI investment in its report on the EU’s AI push.

The UK’s policy direction also mixes encouragement with enforcement. The government’s publication “AI Opportunities Action Plan: One Year On” lays out how it frames AI as a national opportunity, including funding language around initiatives such as a “Sovereign AI Unit.”

When public money helps build these systems, throttling access and utility “for safety” becomes more than annoying. It becomes a governance problem. Taxpayers subsidize capability, then the same institutions demand that capability be padded, branded, and fenced off.

Compliance drift turns premium tools into demos

Put the pieces together and you get the product outcome that customers actually feel.

A visible watermark is one obvious downgrade. Another is the steady creep of “safety” refusals that block legitimate work because a compliance document somewhere treats your use case as risky.

The deeper issue is that centralized AI can be updated remotely, and not always in ways customers asked for. The model gets better, but the interface gets tighter. Capability rises, and utility falls.

You start paying for what the system could do, not what it will let you do.

The only durable exit is local first

If centralized AI keeps drifting toward compliance theater, begging for better guardrails rarely fixes the incentives. Reducing dependency does.

Local first can still include safety controls. The difference is ownership. You choose the constraints because you own the runtime.

The biggest advantage is compounding control. Nobody can remotely rewrite your tool into uselessness because an unelected body published a new guidance document.

Local video is heavier than local text, but open releases keep improving. Tencent’s HunyuanVideo repository is one example of how fast open video generation research is moving into public code.

None of this is as frictionless as a cloud subscription. But the trade is straightforward. In exchange for setup work, you get stability, predictability, and the ability to decide what “safe” means for your context.

Picking a strategy before the guardrails move again

If you rely on commercial AI today, you do not need to flip a switch overnight. You do need a plan.

Identify the workflows where output must be production-ready. Those are the first places where visible watermarks and aggressive refusals create real cost.

Then decide what you want to control. Sometimes it is the model weights. Sometimes it is the moderation layer. Sometimes it is just the ability to run inference when a vendor policy changes.

Finally, treat provenance as a tool, not as theater. Use verification where it fits your risk profile, but do not accept blanket downgrades that exist mainly to satisfy political optics.

Centralized AI is drifting toward a future where capability exists but access is conditional and output is branded. Local first is the opposite. You keep the power, and you keep the option to say no.

Explore more from Popular AI:
