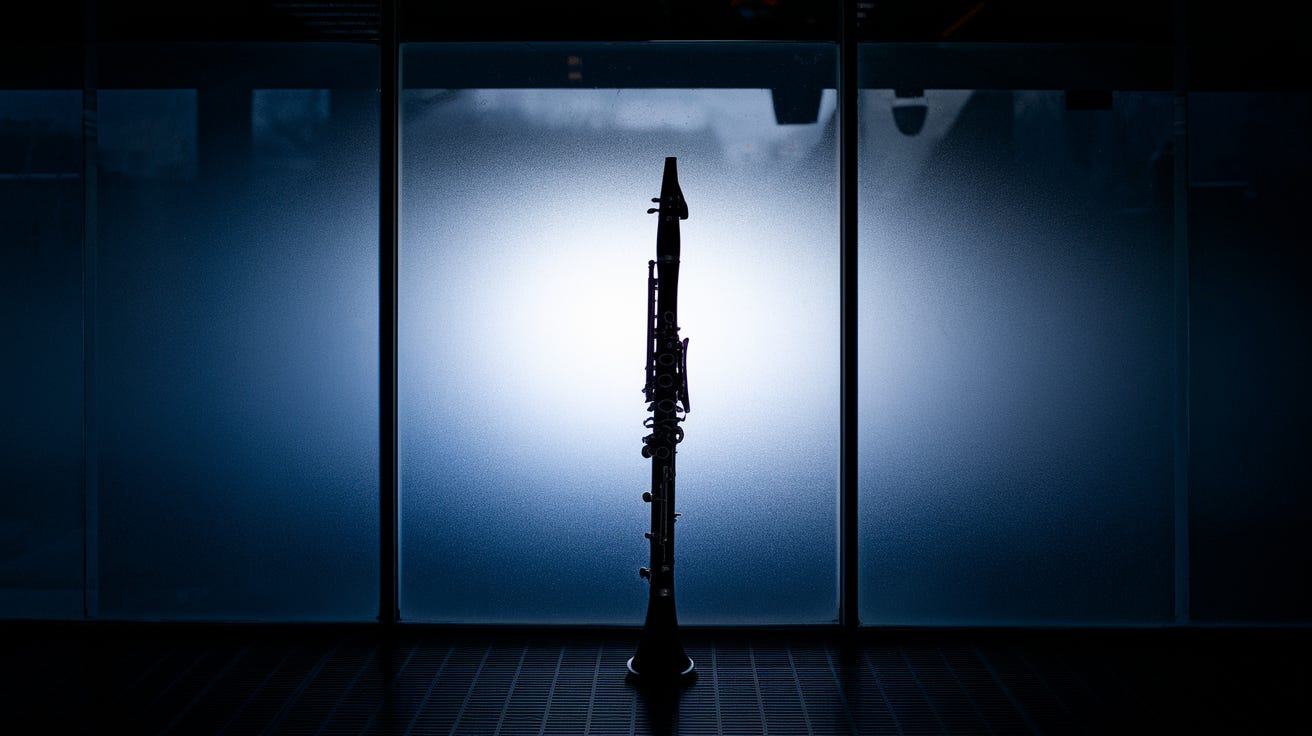

When AI thinks a clarinet is a gun

A Florida school locked down after AI flagged a clarinet as a gun. What this failure reveals about surveillance, false positives, and safety theater.

A Florida classroom became the latest stage for a very modern kind of panic: a school-wide “code red” lockdown triggered by an AI weapon-detection system that confused a clarinet for a firearm.

If that sounds absurd, it should. If it sounds familiar, that is the point.

This is what happens when we swap human judgment for algorithmic certainty and then act surprised when the machine gets it wrong.

When a music case becomes a threat

The pitch behind “AI for safety” is simple: constant vigilance without human error. Cameras watch every hallway, software flags danger in real time, and adults get to feel like they have finally purchased peace of mind.

In practice, you get moments like this one, where a student’s instrument case is treated like a rifle. The incident has already joined the growing catalog of high-profile AI failures tracked in tech coverage, including an updated roundup of AI errors and mistakes that covers the clarinet mix-up itself (a list of recent AI errors and hallucinations).

A single false alert is not “just a glitch” when the response is armed police, terrified kids, and a community pushed through an adrenaline surge it did not need.

The price tag of automated suspicion

The district reportedly pays $250,000 a year for a subscription service that claims it can identify more than 100 types of weapons in real time. That is a lot of money for a system that could not reliably separate an everyday object from a deadly one.

False positives are not rare edge cases in this market. They are a predictable outcome when vendors optimize for catching everything that might be dangerous, even if it means flagging plenty of things that are not. If you want a sense of how often “smart” systems go sideways across industries, you can browse broader compilations of AI disasters and controversies that highlight recurring patterns, including overconfidence, poor testing, and real-world harms (examples of AI disasters across use cases).
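To see why false alarms are predictable rather than freakish, it helps to run the base-rate arithmetic. The sketch below is illustrative only: the prevalence, recall, and false positive rate are made-up numbers, not figures from the vendor or the district, and `alert_precision` is a hypothetical helper name.

```python
def alert_precision(prevalence, recall, false_positive_rate):
    """Share of alerts that correspond to a real weapon (Bayes' rule)."""
    true_alerts = prevalence * recall
    false_alerts = (1.0 - prevalence) * false_positive_rate
    return true_alerts / (true_alerts + false_alerts)


# Hypothetical assumptions, chosen only to show the shape of the problem:
# 1 in 1,000,000 scanned objects is a real weapon, the system catches 99%
# of real weapons, and it wrongly flags 0.1% of harmless objects.
share_real = alert_precision(
    prevalence=1e-6,
    recall=0.99,
    false_positive_rate=0.001,
)
print(f"Share of alerts that are real: {share_real:.4%}")
```

Under those assumed numbers, roughly one alert in a thousand points at a real weapon. The rest are clarinets, umbrellas, and tripods. Real figures would differ, but the pattern holds whenever the thing being searched for is extremely rare.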

The industry sells certainty. Schools end up buying paranoia.

Black boxes don’t earn trust

There is another problem that does not show up on the invoice. These tools are opaque by design.

Parents and teachers cannot see the training data. They cannot audit what the model “learned.” They cannot evaluate the threshold that turns “maybe” into “lock the doors.” When a system flags an object, the explanation often boils down to: trust us.

That posture is dangerous in any setting. In a school, it is corrosive. Even professional risk and governance communities have been warning that AI programs fail in predictable ways when oversight is weak, accountability is unclear, and decision-makers treat automation as a substitute for controls and judgment (lessons on avoiding AI pitfalls and incidents).

If nobody can explain why the alarm went off, then nobody can convincingly promise it will not happen again.

“Objective” tech still carries human bias

When administrators rely on an algorithm to justify a lockdown, the decision can feel less emotional and more “data-driven.” That framing is seductive. It also masks the truth that these systems reflect the choices, assumptions, and blind spots of the people who build them.

Weapon-detection models are typically tuned to maximize recall, the share of real threats they catch, so that nothing dangerous slips through. Precision, the share of alerts that turn out to be real, suffers as a result. Everyday objects become suspicious shapes. Edge cases become emergencies. And the public gradually adapts to a new normal where being watched is treated as the cost of participation.
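The recall-versus-precision trade-off is easy to show with a toy example. This is a minimal sketch, not how any particular vendor's system works: the confidence scores, labels, and thresholds are invented for illustration, and `precision_recall` is a hypothetical helper.

```python
def precision_recall(scores, labels, threshold):
    """Compute (precision, recall) when everything scoring >= threshold is flagged."""
    flagged = [s >= threshold for s in scores]
    tp = sum(f and y for f, y in zip(flagged, labels))        # real threats flagged
    fp = sum(f and not y for f, y in zip(flagged, labels))    # harmless objects flagged
    fn = sum((not f) and y for f, y in zip(flagged, labels))  # real threats missed
    precision = tp / (tp + fp) if (tp + fp) else 1.0
    recall = tp / (tp + fn) if (tp + fn) else 1.0
    return precision, recall


# Invented model confidence scores for eight objects; True marks a real weapon.
scores = [0.95, 0.80, 0.60, 0.55, 0.40, 0.30, 0.20, 0.10]
labels = [True, False, True, False, False, False, False, False]

strict = precision_recall(scores, labels, threshold=0.9)
lenient = precision_recall(scores, labels, threshold=0.5)
print("strict threshold (precision, recall):", strict)
print("lenient threshold (precision, recall):", lenient)
```

In this toy data, the strict threshold never raises a false alarm but misses one of the two real threats. The lenient threshold catches both, and half of its alerts are wrong. Where that dial sits is a human choice, which is why the threshold deserves as much scrutiny as the model.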

This is not limited to schools. It fits into a wider trend of AI controversies that keep resurfacing across sectors as automation expands faster than accountability (a running overview of major AI controversies).

Reclaiming the right to be unobserved

The most common response to failures like this is “we need better AI.” That response misses what the clarinet lockdown actually reveals.

Schools do not need more opaque surveillance layered onto stressed staff and anxious communities. They need clear safety protocols that respect privacy, strengthen relationships, and keep humans responsible for judgment calls. They need procurement standards that demand transparency and independent evaluation, not marketing promises. They need a culture where safety does not automatically mean more monitoring.

Legal and policy watchers are already tracking how quickly AI deployments are expanding and how often they create new risks that institutions are not prepared to manage (a February 2026 roundup of AI developments). Schools should treat that pace as a warning, not an invitation.

Real security is built locally. It comes from attentive adults, trusted communities, and accountability that does not disappear behind a vendor contract. A $250,000 subscription that cannot tell the difference between Mozart and a magazine is not safety. It is expensive theater.
