Why Gemini thinks your face belongs to a public figure

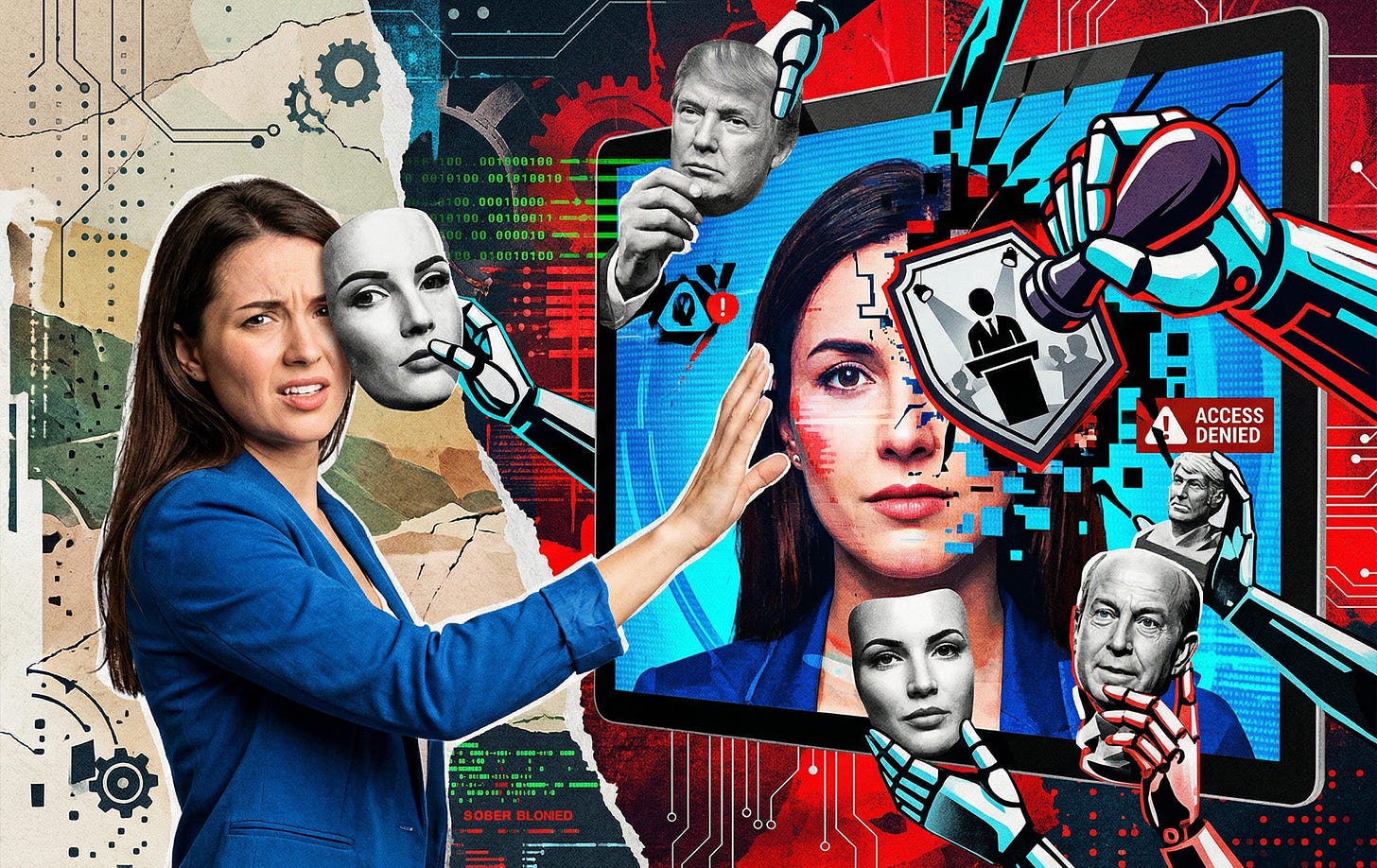

Gemini’s public figure error reveals a deeper problem in AI photo editing, where safety systems can block ordinary users from editing their own faces.

Google sold Gemini image editing as a personal photo tool. Upload a selfie, swap outfits, change the background, place yourself somewhere new, and the system should keep your face looking like you. That was the core promise in Google’s August 26, 2025 announcement for the upgraded Gemini image editor, which leaned heavily on likeness preservation for images of yourself, friends, family, and pets.

By late 2025 and into early 2026, though, users started describing the opposite experience. Instead of editing their own photos, Gemini was refusing them because it decided the image involved a public figure. That failure cuts straight against Google’s pitch. It also says something important about where mainstream AI image editing is heading: toward tools that can do remarkable things when they work, but sit behind increasingly aggressive safety systems that can misread an ordinary face as restricted content.

This matters because it is not a niche annoyance. When a product is marketed around everyday photo edits and then rejects normal users trying to edit their own selfies, the issue is bigger than one bad prompt. It becomes a trust problem, a workflow problem, and a preview of how centralized AI tools handle identity, risk, and control.


Google promised an editor that would keep your likeness intact

The promise was easy to understand. In Google’s own launch post for Gemini image editing, the company framed the update around maintaining a person’s likeness across edits. The messaging was aimed at ordinary people, not power users or researchers. You could upload a photo of yourself, ask for a new outfit, blend images together, or drop yourself into a new setting, and Gemini would try to preserve the face and overall identity that made the image feel personal.

That is a strong product promise because people notice tiny errors in faces immediately. A scenic background can be off by a little and still look fine. A face cannot. If a model warps the eyes, changes the jawline, or smooths someone into a generic AI person, the result breaks fast. Google clearly understood that. The marketing around the upgrade made likeness preservation sound like one of the main reasons to use the feature in the first place.

That is exactly why the public figure refusals land so badly. The failure is not happening at the edges of the experience. It is colliding with the center of the product story. A tool that is supposed to preserve your identity in edits is, in some cases, refusing to work because it thinks your identity belongs to somebody it is not allowed to touch.

The user reports match Google’s own warning about false positives

The public trail is real enough to take seriously. A Google Gemini community thread about the public figure error shows users describing exactly this problem when trying to edit their own photos. These are user reports, not polished Google bug notes, but they fit a pattern that has surfaced often enough to matter.

What makes those reports more credible is that Google’s Gemini overview already acknowledges the broader class of failure. Google says Gemini can produce false positives and false negatives. A false positive, in plain language, is when the system blocks something reasonable because it mistakenly reads it as unsafe or inappropriate. The same overview also explains that candidate responses go through safety checks before users see them.

That combination matters. Once you know Google expects false positives and once you know outputs pass through a safety layer before delivery, the public figure problem starts to look less like a weird mystery and more like an expected byproduct of the way the product is built. The model may be perfectly capable of doing the edit. The user may be making a benign request. The block can still happen because something in the screening layer decides the image is too risky to return.

That distinction is important for readers trying to understand what is going wrong. The visible failure is an editing refusal. The underlying issue may have less to do with raw image generation quality and more to do with a safety system that is tuned to be conservative around identity.

The most likely explanation is a conservative identity safety layer

Google has not published a diagram of the exact public figure detection pipeline used inside Gemini image editing, so any explanation of the mechanism has to be framed carefully. Still, the available evidence points in a clear direction.

The Gemini 3.1 Flash Image model card makes clear that Google’s image stack accepts image inputs and is evaluated on tasks such as character editing and multi-image workflows. Google also says these systems are developed with safety processes, red teaming, and ongoing work to reduce false positives and false negatives. That is enough to sketch the broad shape of the system even if Google does not expose every internal component.

The most plausible explanation is that Gemini image editing is not guarded by a precise celebrity detector with perfect certainty. It is more likely guarded by a safety layer that evaluates identity similarity and asks whether the uploaded face or generated result appears too close to a protected person or another restricted category. That is inference, not formal documentation, but it fits the combination of capabilities Google advertises and the safety architecture Google describes.

Once you look at it that way, the public figure error becomes easier to understand. A user uploads a selfie. Gemini can probably make the requested edit. Before the result is shown, another layer checks whether the face is too close to something the platform does not want edited. If that layer is tuned conservatively, it can choose to block an ordinary face rather than risk letting through an edit of a celebrity, politician, or other public figure.
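
The flow described above can be sketched in a few lines of Python. This is a toy illustration of a thresholded similarity gate, not Google's implementation: the function names, the three-dimensional "embeddings", the gallery entries, and the thresholds are all hypothetical, chosen only to show how a stricter setting blocks a face that a looser one would allow.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def gate_edit(face_embedding, restricted_gallery, threshold):
    """Return (allowed, closest_name, closest_score).

    The edit is blocked when the closest gallery entry scores at or
    above the threshold. A lower threshold is the more conservative one.
    """
    best_name, best_score = None, -1.0
    for name, embedding in restricted_gallery.items():
        score = cosine_similarity(face_embedding, embedding)
        if score > best_score:
            best_name, best_score = name, score
    return best_score < threshold, best_name, best_score

# Hypothetical toy data: real face embeddings have hundreds of dimensions.
gallery = {
    "public_figure_a": [1.0, 0.0, 0.0],
    "public_figure_b": [0.0, 1.0, 0.0],
}
selfie = [0.8, 0.6, 0.0]  # an ordinary face that happens to sit near entry "a"

conservative = gate_edit(selfie, gallery, threshold=0.75)
lenient = gate_edit(selfie, gallery, threshold=0.90)
print("conservative gate allows edit:", conservative[0])
print("lenient gate allows edit:", lenient[0])
```

The same selfie passes or fails depending only on where the threshold sits, which is the point: the refusal says nothing about whether the edit itself was possible.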

For a company like Google, that is an understandable trade. It is also a frustrating one for users. The safer threshold from Google’s perspective can feel absurd from the user’s perspective, especially when the user is looking at their own face and being told the platform does not trust it.

Open-set face matching is exactly where false positives show up

This is where the face recognition literature becomes useful, even if Google has not confirmed the exact implementation. The core challenge looks a lot like an open-set identity problem. In plain English, that means a system is not merely asking whether two known photos match. It is asking whether a face belongs to anyone in a restricted gallery or whether it should be treated as unknown.

That is a much harder problem than basic one-to-one matching, and NIST’s face recognition evaluation work helps explain why. NIST describes false positives as incorrect associations between different people and notes that they happen because different faces can be anatomically similar, even in high-quality frontal images. NIST also points out that false positive identification rates rise in one-to-many searches as gallery size increases.

That last point is the ugly math behind this whole mess. If a safety layer is effectively checking whether your face looks too much like anyone on a do-not-edit list, a bigger restricted gallery means more chances for a mistaken match. The more names a platform is worried about, the more ordinary people can get swept into the wrong bucket.
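
A back-of-the-envelope calculation shows why gallery size matters. The sketch below assumes each comparison against a gallery entry is independent and has the same per-comparison false match rate, which is a textbook simplification rather than NIST's methodology or anything Google has disclosed. The rate and gallery sizes are illustrative numbers only.

```python
def false_positive_identification_rate(false_match_rate, gallery_size):
    """Chance that an unlisted face falsely matches at least one gallery entry.

    Assumes independent comparisons with an identical false match rate,
    which real systems only approximate.
    """
    return 1.0 - (1.0 - false_match_rate) ** gallery_size

# Illustrative values: one false match per million comparisons.
FALSE_MATCH_RATE = 1e-6

for gallery_size in (100, 10_000, 1_000_000):
    rate = false_positive_identification_rate(FALSE_MATCH_RATE, gallery_size)
    print(f"gallery of {gallery_size:>9,}: {rate:.4%} chance of a false flag")
```

Under these assumptions a one-in-a-million per-comparison error stays negligible against a gallery of a hundred faces but climbs to well over half against a gallery of a million, without the matcher itself getting any worse.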

The asymmetry makes the problem worse. One false negative, where a real public figure slips past the filter, can create a reputational crisis, policy blowback, or legal trouble. A batch of false positives, where ordinary users cannot edit their own selfies, usually produces scattered complaints, support threads, and frustration. That is a very different cost structure, and it pushes large platforms toward overblocking.
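
That cost structure can be made concrete with a small expected-cost comparison. Everything in this sketch is hypothetical: the three operating points, the error rates attached to them, the share of uploads that involve a real public figure, and the cost weights are invented to illustrate the reasoning, not measured from any product. A lower threshold here means a stricter gate that blocks more faces.

```python
# Hypothetical operating points:
# (threshold, false_positive_rate, false_negative_rate)
OPERATING_POINTS = [
    (0.60, 0.050, 0.001),  # strict: blocks many ordinary faces
    (0.75, 0.010, 0.010),
    (0.90, 0.001, 0.100),  # lenient: lets more public figures through
]

def expected_cost(fp_rate, fn_rate, fp_cost, fn_cost, public_share):
    """Average cost per upload, given how errors are priced."""
    private_share = 1.0 - public_share
    return private_share * fp_rate * fp_cost + public_share * fn_rate * fn_cost

def cheapest_threshold(fp_cost, fn_cost, public_share=0.01):
    """Pick the operating point with the lowest expected cost."""
    best = min(
        OPERATING_POINTS,
        key=lambda p: expected_cost(p[1], p[2], fp_cost, fn_cost, public_share),
    )
    return best[0]

# If both kinds of error cost the same, the lenient gate wins.
print("equal costs:", cheapest_threshold(fp_cost=1, fn_cost=1))
# If one missed public figure costs 10,000 times a blocked selfie,
# the strict gate wins.
print("asymmetric costs:", cheapest_threshold(fp_cost=1, fn_cost=10_000))
```

Nothing about the matcher changes between the two calls. Only the price attached to each kind of mistake changes, and that alone is enough to move the preferred setting from lenient to strict.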

NIST also notes that false positive behavior can vary across demographic groups because score distributions shift across sex, age, and region-of-birth groupings. That does not prove Gemini’s public figure refusals fall unevenly across different groups. It does mean that any safety layer built on face matching carries that risk. When platforms deploy identity screening at scale, bias concerns do not disappear just because the system is wrapped in the language of safety.

Google has strong reasons to block too much instead of too little

Google’s incentives here are not hard to read. The company has already shown a willingness to tighten safety aggressively after a public failure. In Google’s 2024 explanation of the Gemini image generation controversy, the company acknowledged that the system had become more cautious than intended and was handling some benign prompts inappropriately. That episode matters because it shows precedent. When Google gets burned by an undercooked safety decision, the next version is likely to be stricter.

There is also a real abuse case behind the strictness. Google DeepMind’s research on the misuse of generative AI identifies impersonation and the creation of realistic human likenesses as important misuse patterns. On top of that, Google’s generative AI prohibited use policy restricts uses involving non-consensual intimate content and certain forms of biometric or personal data misuse. A large consumer platform does not need many reminders to treat photorealistic identity editing as a high-risk area.

Seen through that lens, the public figure error is not random. It is the predictable byproduct of a platform trying to protect itself against celebrity deepfakes, impersonation, and harmful synthetic media. Google has legal, reputational, and policy reasons to prefer a system that blocks too much over one that blocks too little.

The problem is that the cost of this trade lands on ordinary users first. When a company overblocks, the company gets cover. The user gets friction. A person trying to preview a haircut, try on a different outfit, or change a background is now stuck negotiating with a safety gate that may treat their own face like restricted property.

This bug says a lot about the future of AI photo editing

The bigger story here is about control. AI photo editing is becoming more powerful, but it is also becoming more mediated. The promise is creative freedom. The reality is often a layered system where model capability, policy rules, and platform risk management all sit between the user and the final image.

That is why this issue matters beyond Gemini. If mainstream image editors are built around likeness preservation, real-world knowledge, and centralized safety review, then identity disputes will become a normal part of the product experience. The more a platform can recognize a face, the more a platform will also feel pressure to restrict what can be done with that face. Sometimes that protects people. Sometimes it blocks the wrong person.

In theory, that sounds manageable. In practice, it can make ordinary workflows brittle. A user does not know which edit will trip the filter. They do not know whether the block came from the text prompt, the uploaded image, the generated result, or a hidden similarity score. They do not know whether retrying with different wording will help. They are left guessing, even when the request is harmless.

That opacity is one reason these failures feel so maddening. The platform does not merely say no. It says no without showing the real threshold, the real classifier, or the real reason a private face was judged too close to a protected one.

What to do if Gemini flags your own face as a public figure

The first move is simple and unglamorous. Report it properly. Google’s own Gemini feedback and problem reporting page explains how to send feedback and include the relevant prompt or files. If Gemini refuses a benign edit of your own photo, save the original image, copy the exact prompt, keep the exact refusal text, and state plainly that the person in the image is you or another private individual, not a public figure.
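
If you expect to file more than one report, it can help to keep the evidence in a consistent shape. The sketch below is a personal record-keeping helper, not part of any Google tool or API: the function name and field names are made up for illustration, and the placeholder bytes stand in for the contents of your own image file.

```python
import hashlib
import json
from datetime import datetime, timezone

def build_refusal_record(image_bytes, prompt, refusal_text, subject_note):
    """Bundle the details worth keeping for one refused edit."""
    return {
        "recorded_at": datetime.now(timezone.utc).isoformat(),
        # The hash lets you show later which exact file was refused.
        "image_sha256": hashlib.sha256(image_bytes).hexdigest(),
        "prompt": prompt,
        "refusal_text": refusal_text,
        "subject_note": subject_note,
    }

# Placeholder values: substitute your own file contents and exact text.
record = build_refusal_record(
    image_bytes=b"placeholder image bytes",
    prompt="Change the shirt color to blue.",
    refusal_text="<paste the exact refusal text here>",
    subject_note="The person in this photo is me, a private individual.",
)
print(json.dumps(record, indent=2))
```

For a real photo, read the file with `open(path, "rb").read()` and pass the result as `image_bytes`. Keeping the prompt and refusal text verbatim matters more than the format you store them in.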

It also helps to simplify the request. Use one face rather than multiple people. Ask for one change at a time. Stick to ordinary edits like changing a shirt color, swapping a background, or adjusting lighting. Avoid anything that references celebrities, styles strongly associated with a famous person, or magazine-cover language that could make the prompt look like identity imitation. Google does not publish these steps as official fixes, but they follow the usual logic of how safety filters produce false positives. The less ambiguity you create, the less work the filter has to do.

That still may not be enough if the problem sits in the image itself rather than the prompt. A face that lands near the wrong threshold can keep triggering the same refusal even when the requested edit is harmless. That is why serious users should think carefully before building an important self-portrait workflow around a hosted tool they do not control.

Why local and more open tools matter when this happens

If you need dependable control over your own images, the practical answer may be to move at least part of the workflow off Gemini. The sourced example in the original reporting is FLUX.1 Kontext Dev through ComfyUI, which gives users a more direct editing workflow and, in the right setup, more control over how images are handled. Tools like Qwen-Image-Edit or Fooocus may also appeal to people who want a less locked-down experience, though the core point is broader than any one model.

Local or more open workflows are not automatically better at everything. They can be harder to set up. They can produce rough results. They can still fail. What they change is the locus of control. You are less dependent on a remote platform’s hidden risk thresholds, product policies, and silent classifier updates.

That matters because hosted tools can shift overnight. A workflow that works today can tighten tomorrow if the provider adjusts safety rules after a policy review, a press cycle, or a misuse incident. With a local stack, the tradeoffs are more visible. You bear more responsibility, but you also know who is making the call.

For anyone doing regular self-portrait editing, avatar creation, fashion mockups, or profile image work, that difference is no longer abstract. The public figure error is a reminder that convenience can come with a hard ceiling. Once a platform decides your request falls on the wrong side of its risk model, your choices narrow fast.

The public figure error is not a quirky edge case

It is tempting to dismiss this as one of those AI glitches that will get ironed out quietly. That reading is too generous. Gemini telling users that their own faces resemble public figures closely enough to block an edit is a predictable failure mode for a centralized system trying to solve a hard identity screening problem under intense safety pressure.

Google’s own public materials already contain the ingredients. There is product marketing built around preserving a person’s likeness. There is a Gemini overview that acknowledges false positives and safety checks. There is a model card that describes safety evaluation and continued work on reducing both false positives and false negatives. There is misuse research pointing to impersonation risks. There is a policy framework that treats biometric and intimate image misuse as serious problems. Once you put those together, the outcome is not surprising. The surprise is that the product promise and the safety posture were always going to collide somewhere this obvious.

For readers, the practical conclusion is straightforward. If Gemini works for light edits, use it. If it starts hallucinating celebrity status onto your own face, do not treat that as a harmless hiccup. Treat it as a sign that the platform’s incentives are no longer aligned with your workflow. Report the bug, document the refusal, and keep a backup plan that you actually control.

The real lesson is bigger than one Gemini bug report. AI image editing is moving toward a world where the best consumer tools may also be the most aggressively managed. When that happens, the question stops being whether the model can make the image you want. The question becomes whether the platform will let you.

